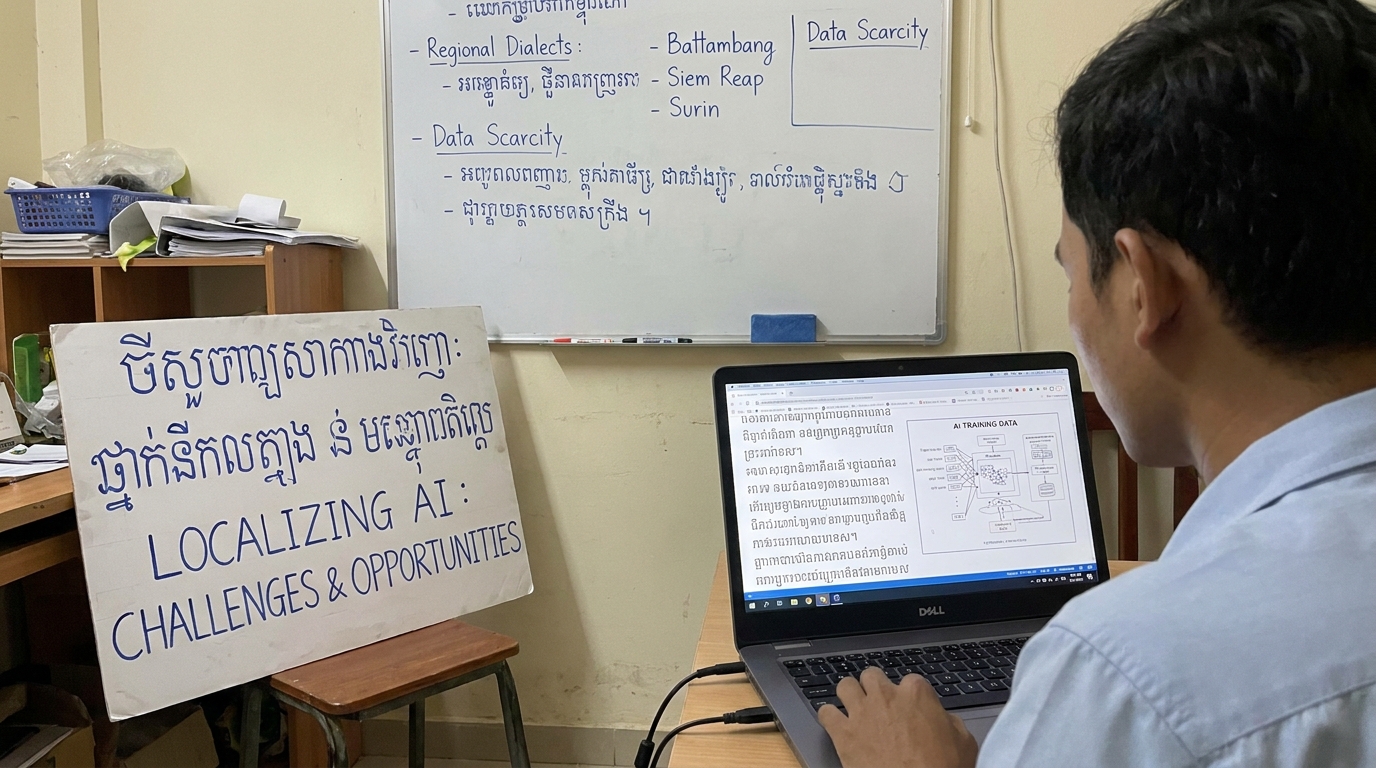

Localizing AI: The Challenges of Training Models on Khmer and Regional Dialects

Explore the technical and resource-based challenges of training AI models on Khmer and regional dialects. Learn about data scarcity, script complexity, and breakthrough solutions like the SEA-LION project for Southeast Asian languages.

The Digital Language Gap

Imagine asking a chatbot a question in your native language and receiving a response that makes no sense, or no response at all. For millions of Cambodians, this is not a hypothetical frustration. It is a daily reality.

Most artificial intelligence tools today “understand” only global languages like English or Chinese. That leaves speakers of Khmer and countless other regional dialects excluded from the AI revolution.

This is not a minor inconvenience. It is a digital divide with real consequences. Farmers cannot access AI-powered agricultural advice. Students cannot use AI tutors. Small business owners cannot leverage automated translation or customer service chatbots. The promise of AI remains out of reach for entire linguistic communities.

Why is this happening? And what are researchers and technologists doing to fix it?

This article explores the specific challenges of training AI models on Khmer and other low-resource regional dialects—and the innovative solutions emerging to bridge this gap.

What Makes a Language “Low-Resource” for AI?

Before diving into Khmer specifically, it helps to understand what AI researchers mean by “low-resource languages.”

High-resource languages like English, Mandarin Chinese, and Spanish have massive digital footprints. Billions of web pages, millions of books, countless hours of transcribed speech, and extensive labeled datasets are available to train AI models.

Low-resource languages lack these essential ingredients. They are typically spoken by smaller populations or underrepresented communities and often lack standardized digital representation.

According to research published in IEEE Xplore, the primary challenges in low-resource language processing include:

| Challenge | Description |
|---|---|
| Annotated data scarcity | Insufficient labeled datasets for supervised machine learning |
| Missing linguistic resources | Lack of sentiment lexicons, part-of-speech taggers, and morphological analyzers |
| Structural complexity | Rich morphology, agglutination, and dialectal variation |
| Domain adaptation issues | Models trained on limited data fail to generalize to new contexts |
| Computational costs | Developing language-specific models remains expensive and expertise-intensive |

The motivation to address these challenges goes beyond technical curiosity. In the researchers' words, progress on low-resource language processing has “contributed to cultural preservation, especially for endangered languages at risk of digital destruction” and supports “social equity, information accessibility, and broader democratization of AI in global contexts”.

Challenge 1: The Data Scarcity Problem

The most fundamental obstacle for Khmer AI is simple: not enough data.

Unlike English, which has billions of digitally available sentences, Khmer content online remains relatively sparse. Much of what does exist is stored in inaccessible formats. “Many Khmer texts are stored in scanned PDFs or images, not in clean digital formats,” making them unusable for AI training.

This data scarcity affects every type of AI model:

For Large Language Models (LLMs): Training requires millions or billions of sentences. Khmer simply does not have that volume of clean, digitized text available.

For Automatic Speech Recognition (ASR): Models need thousands of hours of transcribed speech. The publicly available Khmer speech datasets remain modest in size. One dataset released in early 2026 contains just 37.62 hours of curated speech-text pairs, a fraction of what high-resource languages have.

For dialect identification: Regional variations within Khmer compound the problem. Each dialect would theoretically require its own labeled data, but even standard Khmer data remains scarce.

A Mozilla Data Collective Khmer ASR dataset published in January 2026 represents meaningful progress: 37.62 hours of manually curated speech covering Cambodian cultural topics like gift-giving and recipes. However, this remains orders of magnitude smaller than datasets for English or Mandarin.

Challenge 2: The Script Without Spaces

Khmer presents a technical hurdle that models built around English never encounter.

Khmer words are written without spaces between them.

For human readers who know the language, this is natural. For computers, it is a nightmare.

Many text-processing pipelines rely on word boundaries to understand text structure. They learn that certain sequences of characters form meaningful units (words) and that spaces separate these units. Without spaces, a model cannot easily determine where one word ends and another begins.

This is not a minor preprocessing issue. It fundamentally affects how tokenization—the process of breaking text into manageable pieces—works for Khmer.
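To make the segmentation problem concrete, here is a minimal sketch of greedy longest-match segmentation, one classic dictionary-based approach to scripts without spaces. It is not taken from any of the studies cited here. The function name `forward_max_match` and the toy vocabulary are illustrative assumptions, and Latin letters stand in for Khmer so the ambiguity is easy to see.

```python
def forward_max_match(text, vocab):
    """Greedy longest-match segmentation.

    Characters that match no vocabulary entry are emitted as single tokens.
    """
    max_len = max(len(word) for word in vocab)
    tokens, i = [], 0
    while i < len(text):
        # Try the longest candidate first, shrinking until something matches.
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if piece in vocab or size == 1:
                tokens.append(piece)
                i += size
                break
    return tokens


# Toy vocabulary (hypothetical): the unspaced string has two plausible readings.
vocab = {"the", "cat", "cats", "sat", "at"}
print(forward_max_match("thecatsat", vocab))  # ['the', 'cats', 'at']
```

The greedy pass returns "the cats at" even though "the cat sat" is the more likely reading. A segmenter for real Khmer text faces this kind of ambiguity constantly, which is why segmentation errors can propagate into everything built on top of it.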

Researchers behind a 2025 study on transformer-based Khmer ASR explicitly identified this as one of three major challenges. The other two were “the lack of language resources (text and speech corpora) in digital form” and the fact that “the pronunciation model is not well studied”.

To work around this, researchers have experimented with using characters rather than words as the basic unit for model training. While this avoids the word segmentation problem, characters carry less semantic meaning than whole words, potentially reducing model performance.
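A character-level setup can be sketched in a few lines. The helper names below (`build_char_vocab`, `encode`, `decode`) are hypothetical, and the sample string is written with Unicode escapes and is intended to spell the Khmer phrase for "Khmer language"; treat that spelling as an assumption. The sketch works on Unicode code points, which is only one possible definition of "character" for Khmer.

```python
def build_char_vocab(corpus):
    """Map every distinct code point in the corpus to an integer id."""
    chars = sorted({ch for line in corpus for ch in line})
    return {ch: idx for idx, ch in enumerate(chars)}


def encode(text, vocab):
    """Turn text into a list of ids, one per code point."""
    return [vocab[ch] for ch in text]


def decode(ids, vocab):
    """Invert encode() by looking ids up in the reversed vocabulary."""
    inverse = {idx: ch for ch, idx in vocab.items()}
    return "".join(inverse[i] for i in ids)


# Assumed spelling of "Khmer language", written as escapes to avoid font issues.
sample = "\u1797\u17b6\u179f\u17b6\u1781\u17d2\u1798\u17c2\u179a"
char_vocab = build_char_vocab([sample])
ids = encode(sample, char_vocab)
print(len(sample), len(char_vocab))   # code points in the sample, distinct ids
print(decode(ids, char_vocab) == sample)
```

No segmenter is needed, and the vocabulary stays small. The trade-off is the one described above: each id carries little meaning on its own, and several code points (for example the subscript sign U+17D2 and the consonant after it) combine into a single visual unit, so sequences become longer than the text looks on screen.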

Challenge 3: The Pronunciation Research Gap

Even when text data exists, speech recognition for Khmer faces another obstacle.

The pronunciation model for Khmer is not well studied.

This matters because automatic speech recognition (ASR) systems need to map sounds to text. They need to understand how different phonemes (distinct units of sound) combine to form words, and how these patterns vary across speakers and contexts.

For well-studied languages like English, decades of linguistic research have produced detailed pronunciation models. For Khmer, this foundational work remains incomplete.

Researchers have attempted to bypass this limitation by using state-of-the-art “end-to-end” transformer models that learn directly from speech-text pairs without requiring explicit pronunciation rules. This approach has shown promise but still requires substantial amounts of paired training data.
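For a script without word boundaries, the output of such a system is often scored at the character level, since a word-level score would depend on a segmenter. The sketch below computes character error rate (CER) from a standard edit distance. Nothing here says which metric the cited study reported, so the choice of CER is an assumption, and the function names are illustrative.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two strings (insert, delete, substitute)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def cer(reference, hypothesis):
    """Character error rate: edits needed divided by reference length."""
    if not reference:
        raise ValueError("reference transcript must not be empty")
    return edit_distance(reference, hypothesis) / len(reference)


print(cer("abcd", "abxd"))  # 0.25: one substitution over four characters
```

The same function works unchanged on Khmer strings, because it compares code points and never needs to know where words begin or end.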

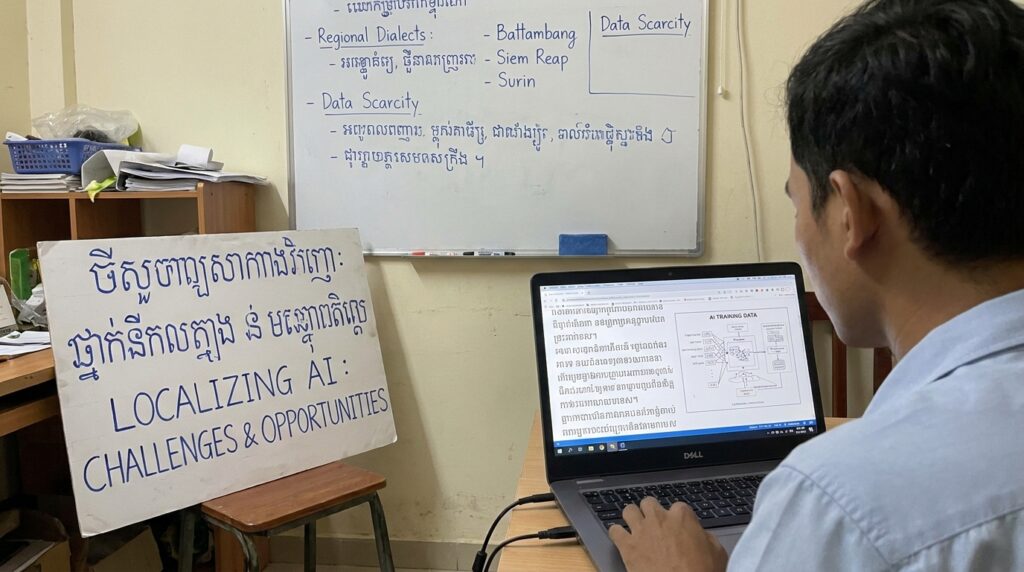

Challenge 4: Dialectal Diversity Within Languages

Khmer is not monolithic. Neither are most regional languages.

Arabic provides a well-documented example of this challenge. Modern Standard Arabic (MSA) is the formal, written standard, but daily conversation happens in dozens of regional dialects that differ significantly in vocabulary, pronunciation, and grammar.

Consider this example from Arabic dialect research: the sentence “How do you pronounce the name of this place?” is expressed differently in Rabat, Cairo, Khartoum, and Sana’a, yet has a single form in MSA.

The same phenomenon exists in Khmer, with variations between Phnom Penh, Battambang, Siem Reap, and other regions. These differences, while natural to human speakers, confuse AI models trained primarily on standard forms of the language.

Research on Arabic dialect identification demonstrates the scale of this problem. Even state-of-the-art pre-trained language models trained specifically for Arabic struggle with dialectal data. The high degree of similarity among dialects makes it difficult to learn accurate dialect-specific representations without massive volumes of labeled data.

For low-resource African languages like Igbo (spoken by over 30 million people in Nigeria), researchers have documented similar challenges. Existing NLP systems trained primarily on Standard Igbo “often fail to recognize dialect-specific lexical and phonological patterns, leading to degraded performance for speakers of non-standard dialects”.

The proposed solution? Dialect-aware frameworks that can identify dialects in both text and speech, then adapt system behavior accordingly. For Igbo, this includes transformer-based classifiers for text and wav2vec/XLS-R embeddings for speech, plus an “adaptive routing mechanism” that selects dialect-specific models for different system components.
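The routing idea can be sketched independently of any particular classifier. The code below is a minimal illustration, not the Igbo framework's actual mechanism: the `DialectPrediction` type, the model names in the registry, and the 0.7 confidence threshold are all hypothetical. It falls back to a standard-language model whenever the dialect classifier is unsure.

```python
from dataclasses import dataclass


@dataclass
class DialectPrediction:
    dialect: str       # label produced by some upstream dialect classifier
    confidence: float  # classifier confidence in [0, 1]


def route(prediction, registry, default="standard", min_confidence=0.7):
    """Pick a dialect-specific model, or the default when unsure or unknown."""
    if prediction.confidence >= min_confidence and prediction.dialect in registry:
        return registry[prediction.dialect]
    return registry[default]


# Hypothetical registry mapping dialect labels to model identifiers.
registry = {
    "standard": "asr-standard",
    "dialect_a": "asr-dialect-a",
    "dialect_b": "asr-dialect-b",
}

print(route(DialectPrediction("dialect_a", 0.91), registry))  # asr-dialect-a
print(route(DialectPrediction("dialect_b", 0.40), registry))  # asr-standard
```

The fallback matters in a low-resource setting: a dialect model trained on very little data may do worse than the standard model when the dialect label itself is doubtful.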

The Current Solutions: How Researchers Are Fighting Back

Despite these formidable challenges, progress is happening. Researchers are developing creative solutions that could serve as models for Khmer and other low-resource languages.

Multilingual Transfer Learning

One promising approach is “multilingual training.” Instead of trying to build a Khmer model from scratch (with limited Khmer data), researchers train a single model on multiple related languages simultaneously.

The logic is simple: languages share patterns. A model that learns from Thai, Lao, and Vietnamese might develop representations that transfer effectively to Khmer. These languages do not all belong to one family (Khmer and Vietnamese are Austroasiatic, while Thai and Lao are Kra-Dai), but long contact has left them with overlapping features: similar grammatical structures, shared loanwords, and comparable phonetic inventories. Those shared features provide a foundation that purely Khmer training cannot.

A 2025 study on transformer-based Khmer ASR demonstrated that “the proposed multi-lingual transformer-based end-to-end model can achieve significant improvement compared to the DNN-HMM baseline model”. In plain English: training on multiple languages together produced better Khmer performance than training only on Khmer.
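One practical question in multilingual training is how often to show the model each language, since the smallest corpus would otherwise be drowned out. A common heuristic is to sample languages in proportion to corpus size raised to a power below 1. The sketch below illustrates that heuristic only. The study quoted above is not described as using it, and the corpus sizes and the exponent `alpha=0.3` are made-up values for illustration.

```python
def sampling_probs(sizes, alpha=0.3):
    """Sampling probability per language, proportional to size ** alpha.

    alpha = 1.0 reproduces raw proportions; smaller values flatten the
    distribution and give low-resource languages a larger share.
    """
    weights = {lang: n ** alpha for lang, n in sizes.items()}
    total = sum(weights.values())
    return {lang: w / total for lang, w in weights.items()}


# Hypothetical sentence counts per language code (not real corpus sizes).
sizes = {"th": 1_000_000, "vi": 2_000_000, "lo": 100_000, "km": 50_000}

raw = sampling_probs(sizes, alpha=1.0)
smoothed = sampling_probs(sizes, alpha=0.3)
for lang in sizes:
    print(f"{lang}: raw={raw[lang]:.3f} smoothed={smoothed[lang]:.3f}")
```

With these made-up numbers, Khmer's share of training batches rises from under 2% to roughly 13%, at the cost of repeating the small Khmer corpus more often.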

Self-Training with Unlabeled Data

Labeled data is expensive to create. Unlabeled data is everywhere.

Researchers working on Arabic dialects developed a “self-training neural approach” that leverages unlabeled data to learn “dialectal indicators”: tokens that frequently co-occur in similar contexts across dialects.

The approach achieved impressive results: gains of up to 36.2% in accuracy and 11.52% in macro-F1-score compared to direct fine-tuning of pre-trained language models.

This matters for Khmer because the same principle applies. Large amounts of unlabeled Khmer text are easier to collect than labeled examples. If models can learn meaningful dialectal patterns from unlabeled data, the annotation bottleneck becomes less severe.
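The general principle can be shown with a generic pseudo-labeling loop. This is a deliberately simple stand-in, not the neural method from the Arabic study: the classifier just counts which tokens it has seen under each label, and the tokens, labels, and 0.6 threshold are placeholders.

```python
from collections import Counter, defaultdict


def train(labeled):
    """Count tokens seen under each label."""
    counts = defaultdict(Counter)
    for tokens, label in labeled:
        counts[label].update(tokens)
    return counts


def predict(counts, tokens):
    """Return (best_label, confidence) from add-one-smoothed token overlap."""
    scores = {label: sum(t in seen for t in tokens) + 1
              for label, seen in counts.items()}
    best = max(scores, key=scores.get)
    return best, scores[best] / sum(scores.values())


def self_train(labeled, unlabeled, threshold=0.6, rounds=3):
    """Repeatedly add confident predictions on unlabeled data as new labels."""
    labeled, pool = list(labeled), list(unlabeled)
    for _ in range(rounds):
        model = train(labeled)
        remaining = []
        for tokens in pool:
            label, confidence = predict(model, tokens)
            if confidence >= threshold:
                labeled.append((tokens, label))  # accept the pseudo-label
            else:
                remaining.append(tokens)
        if len(remaining) == len(pool):
            break  # nothing new was learned this round
        pool = remaining
    return train(labeled), pool


# Placeholder tokens: "a*" behave like dialect A indicators, "b*" like dialect B.
labeled = [(["a1", "a2"], "A"), (["b1", "b2"], "B")]
unlabeled = [["a1", "a3"], ["a3", "a4"], ["b2", "b3"]]
model, leftover = self_train(labeled, unlabeled)
print(predict(model, ["a4"])[0], leftover)  # A []
```

In the first round the sentence containing only "a3" and "a4" is too uncertain to label. Once "a3" has been picked up from a confidently labeled neighbor, the second round can label it, and "a4" becomes a known indicator without anyone annotating it by hand.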

The SEA-LION Initiative

Perhaps the most significant development for Khmer AI is the SEA-LION (Southeast Asian Languages In One Network) project.

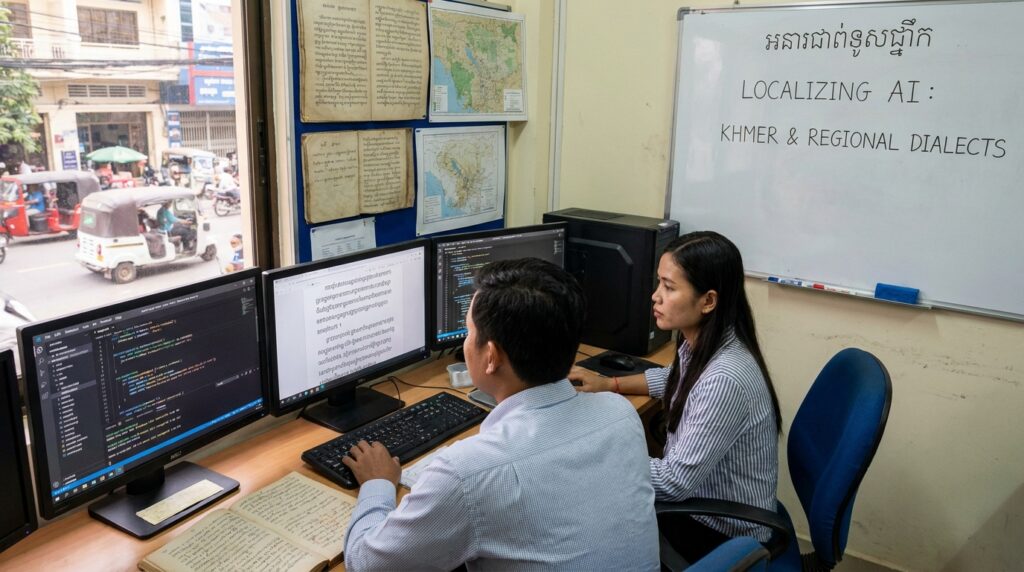

In January 2025, AI Forum Cambodia and AI Singapore signed a Memorandum of Understanding to build the first Khmer-language Large Language Model as part of the SEA-LION regional initiative.

The goal is to create an open-source Khmer AI model that “anyone, government, schools, startups, or NGOs, can use freely”.

The project uses a shared model called SEA-LION 7B, with earlier versions already launched for other Southeast Asian languages. Cambodian researchers and engineers are receiving training, and technical work is adapting the model for Khmer script. A demo was expected before the end of 2025, with code and data available for public use free of charge.

This is not just a technical project. It is an inclusivity project. As the Khmer Times article notes, the goal is to ensure “Cambodians are not just users of global technology, but creators too”.

Case Study: Dogri—A Blueprint for Low-Resource Language Processing

The experience of another low-resource language, Dogri, offers lessons for Khmer AI development.

Dogri is spoken in parts of India and Pakistan but has lacked standardized linguistic resources. Researchers built an end-to-end pipeline to develop the first deep learning-powered morphological analyzer for the language.

Their approach:

- Corpus construction: Manually annotating 290,361 Dogri words with morpheme boundaries and grammatical features (gender, number, case, tense)

- Model architecture: Using Bi-LSTM (Bidirectional Long Short-Term Memory) networks trained on character embeddings

- Labeling strategy: Testing both “monolithic” (single label for all features) and “individual feature” representation

The results were strong, though uneven across word classes. The individual feature representation technique achieved 98.03% accuracy for nouns, 96.75% for adjectives, 95.41% for adverbs, 82.39% for pronouns, and 80.01% for verbs.
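The difference between the two labeling strategies is easier to see in code. The sketch below is an illustration under stated assumptions, not the Dogri team's implementation: the feature inventories in `FEATURES` are invented, and real tag sets would be larger and would vary by word class.

```python
from math import prod

# Hypothetical feature inventories; the real Dogri tag set is not given here.
FEATURES = {
    "gender": ["masc", "fem"],
    "number": ["sg", "pl"],
    "case": ["direct", "oblique"],
    "tense": ["past", "present", "future"],
}


def monolithic_label(analysis):
    """One combined class per word: the model predicts a single joined tag."""
    return "|".join(analysis[name] for name in FEATURES)


def individual_labels(analysis):
    """One small classification task per feature."""
    return {name: analysis[name] for name in FEATURES}


def label_space_sizes():
    """Return (monolithic classes, total individual classes)."""
    mono = prod(len(values) for values in FEATURES.values())
    indiv = sum(len(values) for values in FEATURES.values())
    return mono, indiv


word = {"gender": "masc", "number": "sg", "case": "direct", "tense": "past"}
print(monolithic_label(word))   # masc|sg|direct|past
print(label_space_sizes())      # (24, 9)
```

The monolithic scheme multiplies the inventories together, so many combined tags will have few or no training examples. Predicting each feature separately keeps every output small, which is one plausible reason a per-feature scheme can do well on a modest corpus.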

The key takeaway for Khmer: With systematic annotation effort and appropriate model architecture, even languages with minimal existing resources can achieve high-performance NLP.

The Road Ahead: What Needs to Happen

Bridging the Khmer AI gap requires coordinated effort across multiple fronts.

Data Collection and Curation

The most urgent need is more data—and the right kind of data.

Text data requires digitizing existing Khmer content, including newspapers, books, and government documents. Speech data requires recording and transcribing hours of natural conversation across different regions, age groups, and contexts.

The Mozilla dataset is a start, but roughly 37 hours is not enough. The SEA-LION project aims to collect additional data from “newspaper archives, subtitles from TV shows, or even public reports”. These efforts need scaling.
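Once text has been collected, one simple curation step is to keep only lines that are mostly written in Khmer script. The sketch below checks code points against the Unicode Khmer block (U+1780 to U+17FF). The 0.8 threshold and the function names are assumptions for illustration, and this is not described as part of the SEA-LION pipeline.

```python
KHMER_BLOCK = range(0x1780, 0x1800)  # Unicode "Khmer" block, U+1780..U+17FF


def khmer_ratio(text):
    """Share of non-whitespace code points that fall in the Khmer block."""
    chars = [ch for ch in text if not ch.isspace()]
    if not chars:
        return 0.0
    return sum(ord(ch) in KHMER_BLOCK for ch in chars) / len(chars)


def keep_khmer_lines(lines, min_ratio=0.8):
    """Filter a list of lines down to those that are mostly Khmer script."""
    return [line for line in lines if khmer_ratio(line) >= min_ratio]


# Escaped Khmer code points mixed with Latin letters: 4 of 7 characters match.
mixed = "\u1797\u17b6\u179f\u17b6 abc"
print(round(khmer_ratio(mixed), 3))  # 0.571
print(keep_khmer_lines(["\u1797\u17b6\u179f\u17b6", "hello"]) == ["\u1797\u17b6\u179f\u17b6"])
```

A filter like this only checks script, not quality. Latin digits, punctuation, and invisible formatting characters all count against the ratio here, so the threshold would need tuning on real data.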

Technical Innovation

Researchers must continue developing architectures that work well with limited data. Promising directions include:

- Character-level models that avoid word segmentation problems

- Multilingual pre-training that leverages related languages

- Self-supervised learning that extracts patterns from unlabeled data

- Dialect-aware architectures that adapt to regional variation

Community and Policy Support

Technology alone cannot solve this problem. Institutional support matters.

As the UNESCO recommendation cited in the Khmer Times article notes, “teachers and students should use AI as a support tool, not as a source of truth”. But this requires that AI tools exist in Khmer in the first place.

Government policies supporting open data, academic research funding, and public-private partnerships like the Cambodia-Singapore collaboration are essential.

Practical Implications: Why This Matters

The stakes here are not abstract. They affect real people.

Education: Khmer-speaking students cannot access AI tutoring, personalized learning, or automated feedback in their native language. Teachers cannot generate Khmer-language teaching materials efficiently.

Healthcare: Medical AI assistants, symptom checkers, and health information systems remain inaccessible to Khmer speakers.

Agriculture: Farmers cannot use AI for weather prediction, pest identification, or market price analysis in Khmer.

Commerce: Small businesses cannot leverage AI-powered customer service, translation, or marketing tools.

Government: Digital services cannot reach all citizens equally when they operate only in global languages.

As one researcher put it, enabling NLP for low-resource languages “facilitated cross-cultural sentiment analysis, disaster response and social media monitoring in diverse linguistic regions”. The benefits extend beyond convenience to inclusion and equity.

Frequently Asked Questions

Q: Why can’t we just use Google Translate for Khmer?

A: Google Translate exists for Khmer, but its quality lags far behind high-resource languages because of the same data and technical challenges discussed in this article. It works for basic phrases but struggles with nuance, dialect, and complex sentences.

Q: How long until we have good Khmer AI models?

A: The SEA-LION project expected a demo before the end of 2025. However, achieving parity with English-language models will likely take years of sustained data collection and model refinement.

Q: Is Khmer uniquely difficult for AI?

A: No. Khmer shares challenges with many low-resource languages, particularly those using non-Latin scripts without explicit word boundaries. However, each language has unique characteristics that require tailored solutions.

Q: Can’t we just use English models and translate?

A: Translation adds latency, cost, and error. More importantly, it fails to capture cultural context and local knowledge that only native-language AI can provide.

Q: Is anyone working on Khmer dialects specifically?

A: Current efforts focus on standard Khmer. Dialect-specific work remains a frontier area, though techniques developed for Arabic and Igbo dialects could be adapted.

Conclusion: From Exclusion to Inclusion

The challenges of training AI on Khmer and regional dialects are real and substantial. Data scarcity, script complexity, pronunciation research gaps, and dialectal diversity create a steep technical mountain to climb.

But the mountain is being climbed.

Multilingual models are demonstrating that related languages can bootstrap each other. Self-training approaches are extracting value from unlabeled data. Regional initiatives like SEA-LION are building open-source Khmer LLMs. Researchers worldwide are developing architectures specifically designed for low-resource languages.

The goal is not just technical. It is social. It is ensuring that the AI revolution does not leave linguistic communities behind—that a farmer in Battambang can ask a question in Khmer and receive a useful answer, that a student in Phnom Penh can learn with AI tools in their native language, that digital transformation includes everyone.

As the Dogri research demonstrates, systematic effort yields results. As the SEA-LION project shows, regional collaboration accelerates progress. And as the Arabic dialect work suggests, innovative architectures can offset data limitations.

The Khmer language has survived centuries of change. It will not be left behind by artificial intelligence—not if researchers, technologists, and communities continue working together to build the models, collect the data, and share the knowledge required to bring AI to every language, every dialect, every speaker.