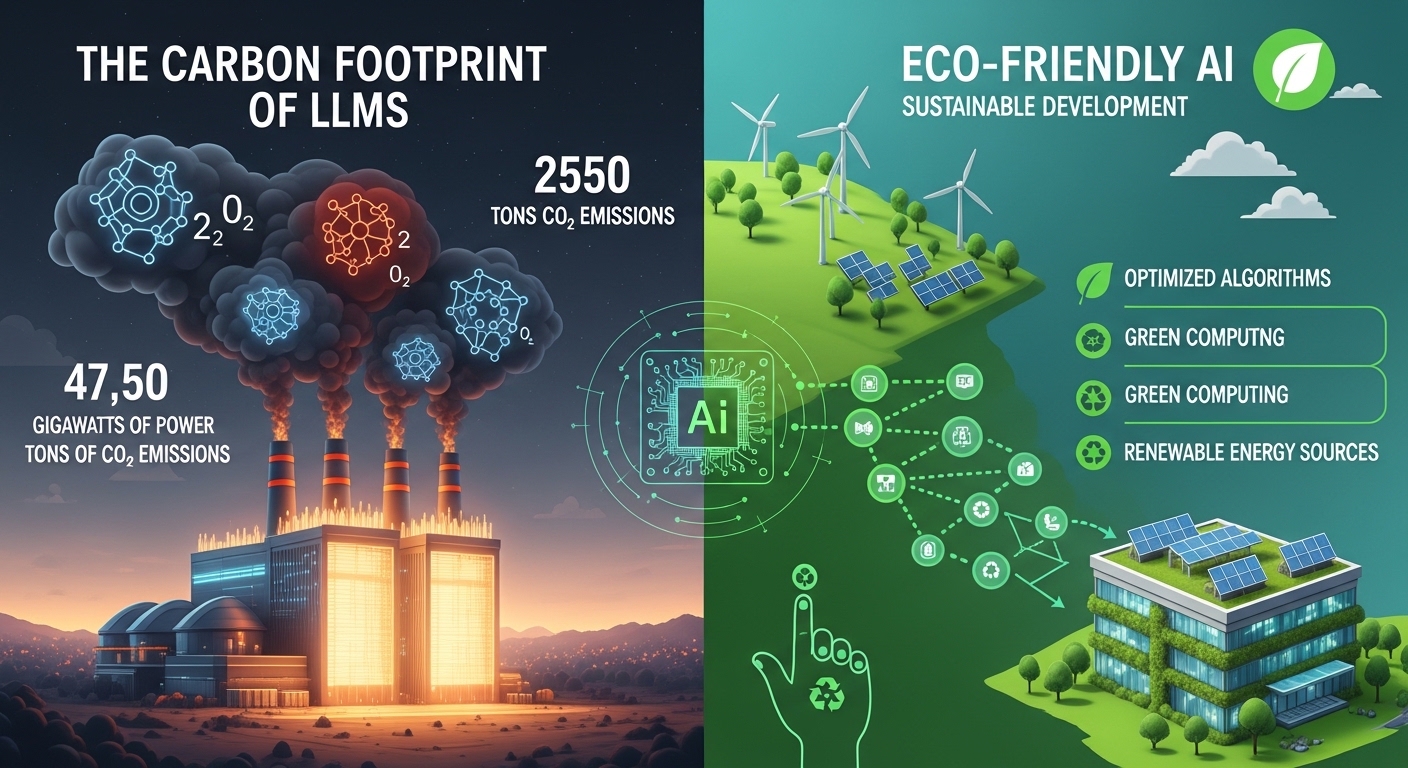

Eco-Friendly AI: How Developers are Reducing the Carbon Footprint of LLMs

Discover how developers are reducing the carbon footprint of LLMs through model compression, lifecycle transparency, and green inference strategies, with lessons from the Moshi and Mistral studies and the PEARL framework.

The Hidden Cost of Every Prompt

Every time you ask ChatGPT a question, energy is consumed. Not just in the data center, but across the entire lifecycle of the model—from the GPU manufacturing to the research experiments that never saw the light of day.

For years, the AI industry has focused on a narrow metric: the carbon cost of the final training run. The narrative was simple: train a model once, deploy it widely. The math seemed to justify the investment.

But that narrative is dangerously incomplete.

Recent research has pulled back the curtain on the true environmental footprint of large language models, and the numbers are sobering. A single large language model can generate 493 metric tons of carbon emissions, equivalent to powering 98 US homes for a full year, while consuming 2.77 million liters of water, about 24.5 years of water usage for an average American. Mistral AI’s Large 2 model, after 18 months of operation, had generated 20.4 kilotons of CO₂ and consumed 281,000 cubic meters of water.

But here is the critical insight: the research and development process can add emissions equal to roughly half of those from the final training run. Failed experiments, debugging runs, and ablation studies, together with hardware manufacturing, form the invisible iceberg beneath the visible tip of the final training run.

This guide explores how developers are fighting back. From model compression techniques that reduce energy consumption by over 90% to green inference strategies that can cut per-request energy by 100x, the tools for eco-friendly AI are here. The question is whether the industry will adopt them.

The Transparency Problem: What We Don’t Know

Before we can reduce AI’s carbon footprint, we must measure it. The industry’s lack of transparency has been a persistent barrier.

The Moshi Foundation Model Case Study

In April 2026, researchers published the first fine-grained analysis of the compute spent creating a multi-modal LLM: the Moshi 7B-parameter speech-text foundation model developed by Kyutai, a leading open science AI lab.

What made this study unique: Instead of only reporting the final training run, the researchers dove into the “anatomy” of compute-intensive research, quantifying GPU time invested in:

- Early experimental stages

- Failed training runs

- Debugging and validation

- Ablation studies

- Specific model components

The actionable finding: The study provides concrete guidelines to reduce compute usage and environmental impacts in multi-modal LLM (MLLM) research, paving the way for more sustainable AI development.

The Development vs. Training Gap

A 2025 ICLR paper delivered a wake-up call to the industry. When researchers accounted for hardware manufacturing, model development, and final training runs for a series of models ranging from 20 million to 13 billion parameters, they found that model development amounted to ~50% of the impact of training.

The key takeaway: Reporting only final training emissions, as most model developers currently do, leaves out a large share of the footprint. The research and experimentation phase (the failed runs, the dead ends, the debugging) carries a substantial environmental cost that is rarely disclosed.

Mistral’s Lifecycle Analysis

Mistral AI, partnering with sustainability consultancy Carbone 4 and the French ecological transition agency, conducted a comprehensive lifecycle analysis of its Large 2 model. The findings, peer-reviewed by Resilio and Hubblo, confirmed that 85.5% of total GHG emissions and 91% of water consumption occurred during model development and user interaction.

Per-query impact: A single 400-token response from Mistral’s “Le Chat” chatbot generates approximately 1.14 grams of CO₂ and consumes 45 milliliters of water, roughly equivalent to watching a 10-second streaming video in the US.
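To put the per-response figure in context, the arithmetic below scales it to a daily volume. The 1.14 g and 45 mL values are the Mistral figures quoted above; the volume of one million responses per day and the function name `daily_footprint` are hypothetical, chosen only for illustration.

```python
# Per-response figures quoted above for a 400-token Le Chat response.
CO2_G_PER_RESPONSE = 1.14
WATER_ML_PER_RESPONSE = 45.0


def daily_footprint(responses_per_day: int) -> tuple[float, float]:
    """Return (kg of CO2, litres of water) for a given daily response count."""
    co2_kg = responses_per_day * CO2_G_PER_RESPONSE / 1000.0
    water_l = responses_per_day * WATER_ML_PER_RESPONSE / 1000.0
    return co2_kg, water_l


# Hypothetical volume: one million responses per day.
co2_kg, water_l = daily_footprint(1_000_000)
print(f"{co2_kg:.0f} kg CO2 and {water_l:.0f} L of water per day")
```

Small per-query numbers add up quickly at scale, which is why the per-request optimizations discussed later matter.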

Mistral’s call to action is clear: greater transparency across the AI industry is essential to steer the sector toward alignment with global climate objectives.

The Compression Toolkit: Smaller Models, Smaller Footprints

The most direct path to reducing AI’s carbon footprint is making models smaller without sacrificing performance.

Quantization: 10x Faster, 6x Less Carbon

Quantization reduces numerical precision—moving from 32-bit floating point numbers to 8-bit or even 4-bit representations. The energy savings are dramatic.
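As a rough illustration of what reducing precision means, the sketch below applies symmetric 8-bit integer quantization to a handful of made-up weights in plain Python. It is not QLoRA's 4-bit scheme, and the names `quantize_int8` and `dequantize` are illustrative, not taken from any library.

```python
def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization of floats to the int8 range."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to [-127, 127].
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Map the integers back to approximate float values."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.05, 0.33, -0.61]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, f"max error = {max_error:.4f}")
```

Each weight is stored in one byte instead of four, and the rounding error is bounded by half the scale. Real frameworks add per-channel scales, calibration, and hardware kernels on top of this basic idea.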

A 2024 study from Nanyang Technological University evaluated QLoRA (Quantized Low-Rank Adaptation) with FP4 precision and FP16 compute type. The results:

| Metric | Baseline | QLoRA-Optimized | Improvement |
|---|---|---|---|
| Accuracy | 98.58% | 98.60% | Slightly better |
| Carbon footprint (20K samples) | 0.0095 kg CO₂ | 0.0016 kg CO₂ | ~6x reduction |
| Inference time | 0.016 seconds | 0.0059 seconds | ~10x faster |

The key insight: Near-zero accuracy degradation with dramatically improved efficiency. The most optimized model was 10 times faster and produced nearly 6 times less carbon emissions over 20,000 samples.
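The improvement ratios can be checked directly from the tabulated values. The short calculation below uses only the numbers in the table. The carbon figures reproduce the roughly 6x reduction, whereas the listed inference times work out to about 2.7x, so the 10x speedup quoted for the study may refer to a configuration or measurement not shown in this excerpt.

```python
# Values copied from the table above.
baseline = {"carbon_kg": 0.0095, "inference_s": 0.016}
optimized = {"carbon_kg": 0.0016, "inference_s": 0.0059}

carbon_ratio = baseline["carbon_kg"] / optimized["carbon_kg"]
time_ratio = baseline["inference_s"] / optimized["inference_s"]
print(f"carbon: {carbon_ratio:.1f}x lower, time: {time_ratio:.1f}x lower")
```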

Pruning and Distillation: Removing the Unnecessary

A comparison study published on GitHub evaluated compression techniques on transformer models including BERT, DistilBERT, ALBERT, and ELECTRA.

The results table (excerpt):

| Model | Accuracy | Energy Consumption (kWh) | CO₂ Emissions (kg) |
|---|---|---|---|
| BERT Baseline | 96.15% | 7.197 | 3.366 |
| BERT w/ Pruning & Distillation | 95.90% | 4.887 | 2.270 |
| DistilBERT Baseline | 95.88% | 3.364 | 1.563 |
| TinyBERT | 95.15% | 0.629 | 0.292 |

The takeaway: TinyBERT achieved 95.15% accuracy, one percentage point below the BERT baseline, while consuming only 8.7% of the energy (0.629 kWh vs. 7.197 kWh). That is a 91.3% reduction in energy consumption with minimal accuracy trade-off.
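The same comparison can be made for every row of the table. The snippet below uses only the tabulated values and prints, for each model, the energy saving relative to the BERT baseline, the accuracy gap, and the implied kilograms of CO₂ per kWh (a quick sanity check that the energy and emissions columns are consistent with each other).

```python
# (accuracy %, energy kWh, CO2 kg) copied from the table above.
results = {
    "BERT Baseline": (96.15, 7.197, 3.366),
    "BERT w/ Pruning & Distillation": (95.90, 4.887, 2.270),
    "DistilBERT Baseline": (95.88, 3.364, 1.563),
    "TinyBERT": (95.15, 0.629, 0.292),
}

base_acc, base_kwh, _ = results["BERT Baseline"]
for name, (acc, kwh, co2) in results.items():
    saving = 100.0 * (1.0 - kwh / base_kwh)
    print(f"{name}: {saving:.1f}% less energy, "
          f"{base_acc - acc:.2f} points lower accuracy, "
          f"{co2 / kwh:.3f} kg CO2 per kWh")
```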

Choosing the Right Compression Technique

The study evaluated three primary techniques:

| Technique | How It Works | Best For |
|---|---|---|
| Quantization | Reduces numerical precision (FP32 → INT8/FP4) | Inference optimization; near-zero accuracy loss |
| Pruning | Removes unimportant weights or neurons | Reducing model size with moderate accuracy retention |
| Distillation | Small “student” model learns from large “teacher” | Highest accuracy retention but requires training |

The recommendation: QLoRA with FP4 quantization achieved the best balance, with 98.60% accuracy, a 6x reduction in carbon emissions, and 10x faster inference.
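Of the three techniques, pruning is the easiest to show in a few lines. The sketch below implements unstructured magnitude pruning, one common variant, on a made-up list of weights. The function name `magnitude_prune` and the 50% sparsity target are illustrative assumptions, not details from the study.

```python
def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the smallest-magnitude weights until `sparsity` is reached."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices sorted by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


layer = [0.91, -0.02, 0.40, 0.03, -0.75, 0.10, -0.01, 0.55]  # made-up weights
pruned = magnitude_prune(layer, sparsity=0.5)
print(pruned)
```

In practice, pruned models are usually fine-tuned afterwards to recover accuracy, and the energy benefit depends on whether the runtime can exploit the resulting sparsity.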

Smart Serving: The Inference Optimization Frontier

Even with a compressed model, how you serve it matters enormously. A January 2026 study revealed that system-level design choices can lead to orders-of-magnitude differences in energy consumption for the same model.

The Quantization Caveat

Lower-precision formats yield energy gains only in compute-bound regimes, not universally. The study, which performed detailed empirical analysis on NVIDIA H100 GPUs, found that quantization benefits depend heavily on the specific workload and hardware configuration.

Key finding: Batching improves energy efficiency, especially in memory-bound phases like decoding (the token-by-token generation phase of LLM inference). When multiple requests are grouped together, the GPU spends less time idle and more time productively computing.
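A toy cost model helps show why batching pays off in a memory-bound phase. It assumes each decode step has a fixed cost (reading the model weights from memory) that is shared by all sequences in the batch, plus a small per-sequence cost. The function `energy_per_request` and every constant in it are hypothetical, not measurements from the study.

```python
def energy_per_request(batch_size: int,
                       fixed_j_per_step: float = 8.0,
                       per_seq_j_per_step: float = 0.2,
                       steps: int = 256) -> float:
    """Toy model: a decode step pays a fixed cost (reading the weights)
    shared by every sequence in the batch, plus a small per-sequence
    cost. All constants are hypothetical."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    per_step = fixed_j_per_step / batch_size + per_seq_j_per_step
    return per_step * steps


for b in (1, 8, 32):
    print(f"batch={b:>2}: {energy_per_request(b):.1f} J per request")
```

Under these assumptions the per-request energy falls steeply at first and then flattens as the per-sequence term starts to dominate, which is the usual shape of batching gains.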

The 100x Breakthrough: Structured Request Timing

The most striking finding: structured request timing (arrival shaping) can reduce per-request energy by up to 100 times.

How it works: Instead of processing requests as they arrive randomly (which creates inefficient bursty workloads), the system shapes request arrivals into a predictable pattern. This allows the GPU to operate in its most efficient regime continuously, dramatically reducing the energy cost per inference.
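One simple way to picture arrival shaping is to hold incoming requests briefly and release them together at regular intervals. The sketch below is an assumption about how this could look, not the mechanism used in the study; `shape_arrivals`, the 0.5-second window, and the timestamps are all hypothetical.

```python
from collections import defaultdict


def shape_arrivals(arrival_times: list[float],
                   window_s: float) -> dict[float, list[float]]:
    """Hold each request until the end of its time window so that
    requests are released together at regular intervals."""
    batches: dict[float, list[float]] = defaultdict(list)
    for t in sorted(arrival_times):
        release_at = (int(t // window_s) + 1) * window_s
        batches[release_at].append(t)
    return dict(batches)


# Hypothetical bursty arrival timestamps, in seconds.
arrivals = [0.02, 0.05, 0.31, 0.33, 0.34, 0.90, 1.41, 1.45]
shaped = shape_arrivals(arrivals, window_s=0.5)
for release_at, reqs in shaped.items():
    print(f"release at {release_at:.1f}s: {len(reqs)} requests")
```

The trade-off is latency: each request can wait up to one full window before it is served, so the window length has to be chosen against the service's response-time targets.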

The implication: Sustainable LLM deployment depends not only on model internals but also on the orchestration of the serving stack. Phase-aware energy profiling and system-level optimizations are essential for greener AI services.

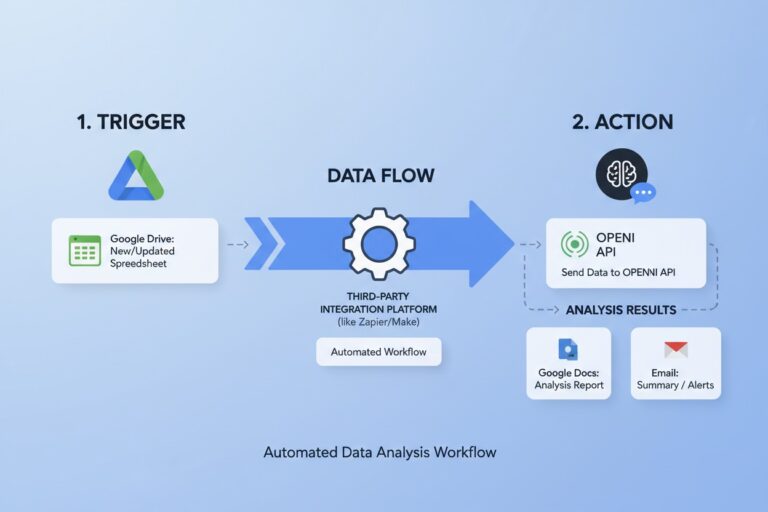

PEARL: Routing Queries to the Right Model

The PEARL framework (Performance and Energy Aware Routing for LLMs) introduces a novel approach: intelligently routing each query to the most appropriate LLM based on its complexity.

How PEARL works:

- A lightweight proxy model assesses the complexity of each incoming query

- The system predicts both performance and energy consumption for multiple candidate LLMs

- Decision-makers configure an energy budget for inference

- PEARL assigns queries to the most accurate LLM that stays within the budget

Results: PEARL reduces energy consumption by over 18% on benchmark datasets while maintaining or improving accuracy compared to using the largest model for every query.

The broader implication: Organizations can deploy a suite of models—from small, efficient ones to large, powerful ones—and let PEARL route queries appropriately. Simple queries go to small models; complex ones escalate to larger models. This “good enough” approach dramatically reduces average energy per query without sacrificing user experience.
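The routing decision described above can be sketched as a budget-constrained selection. This is a simplified illustration, not PEARL's actual implementation: the `Candidate` class, the `route` function, the per-query budget, and all predicted values are hypothetical stand-ins for what a proxy model would supply.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    predicted_accuracy: float  # would come from the proxy model
    predicted_energy_j: float  # would come from the proxy model


def route(candidates: list[Candidate], energy_budget_j: float) -> Candidate:
    """Pick the most accurate candidate whose predicted energy fits the
    budget; fall back to the cheapest model if none fits."""
    affordable = [c for c in candidates
                  if c.predicted_energy_j <= energy_budget_j]
    if not affordable:
        return min(candidates, key=lambda c: c.predicted_energy_j)
    return max(affordable, key=lambda c: c.predicted_accuracy)


# Hypothetical predictions for one query.
options = [
    Candidate("small", 0.81, 40.0),
    Candidate("medium", 0.88, 150.0),
    Candidate("large", 0.90, 900.0),
]
print(route(options, energy_budget_j=200.0).name)
```

With a 200 J budget the large model is excluded, and the medium model wins because its predicted accuracy is the highest among the affordable options.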

ELLIE: Edge-Optimized Inference

For edge deployments (on-device AI on PCs and mobile devices), the ELLIE framework splits LLM inference into its two distinct phases:

| Phase | Characteristics | Best Processing Unit |
|---|---|---|
| Prefill | Highly parallel, compute-intensive | GPU or NPU |
| Decode | Sequential, memory-intensive | CPU or NPU |

ELLIE dynamically selects the optimal device mapping (CPU, GPU, or NPU) for each phase based on the specific prompt, hardware configuration, and user requirements (latency vs. energy vs. energy-delay product, or EDP).

The insight: Static mapping to a single processing unit is suboptimal. By splitting the two phases across different hardware, ELLIE achieves better energy efficiency and EDP than traditional approaches.
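The idea of choosing a device per phase can be illustrated with a small exhaustive search. The sketch below is not ELLIE's algorithm; the per-device latency and energy profiles are invented numbers, and `best_mapping` is a hypothetical helper that simply scores every prefill/decode pairing against the chosen objective.

```python
from itertools import product

# Hypothetical per-phase profiles: device -> (latency in s, energy in J).
PREFILL = {"CPU": (1.20, 18.0), "GPU": (0.15, 9.0), "NPU": (0.30, 4.0)}
DECODE = {"CPU": (2.00, 20.0), "GPU": (2.10, 40.0), "NPU": (3.20, 12.0)}


def best_mapping(objective: str) -> tuple[str, str]:
    """Return the (prefill device, decode device) pair that minimises
    latency, energy, or energy-delay product (EDP)."""
    def score(pair: tuple[str, str]) -> float:
        p_lat, p_en = PREFILL[pair[0]]
        d_lat, d_en = DECODE[pair[1]]
        latency, energy = p_lat + d_lat, p_en + d_en
        scores = {"latency": latency, "energy": energy,
                  "edp": energy * latency}
        return scores[objective]

    return min(product(PREFILL, DECODE), key=score)


for goal in ("latency", "energy", "edp"):
    print(goal, best_mapping(goal))
```

With these made-up profiles, each objective selects a different mapping, and two of the three split the phases across devices. That is the point of the approach: no single static assignment is best for every requirement.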

Green Data Centers: Cooling and Power Infrastructure

Model optimization is only half the equation. The physical infrastructure running these models matters just as much.

Waterless Cooling: The ZutaCore Solution

Traditional data center cooling consumes enormous amounts of water and energy. ZutaCore’s HyperCool technology offers a different path: two-phase, direct-to-chip cooling that operates without water.

The specs:

- Deployed in more than 40 sites worldwide (Equinix, SoftBank, University of Münster)

- Compatible with high-performance systems (NVIDIA HGX B300 server)

- Supports higher processor densities than traditional cooling

- Enables energy recovery and reuse initiatives

The partnership: ZutaCore has partnered with EGIL Wings to deploy a 15MW AI Vault as the first phase of a global rollout across North America, Europe, and Asia. The system integrates EGIL Wings’ microgrid and renewable energy solutions with ZutaCore’s waterless cooling.

“AI data centres are becoming part of the energy factories of the future. Together, we’re building a new generation of AI energy community ecosystems—faster to deploy, more efficient, and inherently sustainable.”

— Erez Freibach, Co-founder and CEO, ZutaCore

Chindata’s Integrated Architecture

Chindata Group’s AI Data Center Total Solution NEXT represents a comprehensive approach to green AI infrastructure.

Key components:

| System | Capability |
|---|---|
| X-Power | 800-volt high-voltage DC architecture; supports 12 kW to 150 kW per rack; integrates layered energy storage |
| X-Cooling | Air-cooled, liquid-cooled, and hybrid configurations; supports 200+ kW per rack; dynamically optimizes performance |
| “Kunpeng” Platform | AI-powered intelligent operations; automated energy optimization; fault localization; alarm handling |

The results: Modular building design improves on-site installation efficiency by approximately 50%. The architecture incorporates renewable power, IT-grade energy storage, and wastewater recovery systems.

The “Energy Factory” Vision

The emerging paradigm is the AI data center as an energy factory—not just consuming power but integrated with renewable generation, energy storage, and heat recovery. The ZutaCore and Chindata approaches represent different paths to the same destination: AI infrastructure that is sustainable by design, not as an afterthought.

The Measurement Imperative: What Gets Measured Gets Managed

Before implementing any of these techniques, organizations must measure their current footprint.

Carbon Tracker for LLMs

A GitHub-hosted tool called Carbon Tracker for LLMs (ChatGPT Carbon Tracker) allows users to:

- Track real-time carbon emissions during LLM inference

- Estimate cumulative environmental impact

- Make informed decisions about model deployment

The Green Metrics That Matter

| Metric | Why It Matters |
|---|---|
| Energy per inference (kWh) | Direct operational cost and carbon impact |
| Water consumption (liters) | Often overlooked but significant for data center location |
| Hardware manufacturing footprint | The “embodied carbon” of GPUs and servers |
| Research/development overhead | Can add roughly 50% on top of training emissions, yet is rarely reported |

The Call for Standardization

Mistral’s report, the Moshi study, and the ICLR paper all converge on the same conclusion: the industry needs standardized environmental reporting. Without transparency, developers cannot make informed trade-offs, and regulators cannot set meaningful standards.

Implementation Roadmap for Organizations

Based on current research and industry deployments, here is a practical framework for reducing LLM carbon footprint.

Phase 1: Measure Your Baseline (Week 1-2)

- Deploy Carbon Tracker or similar monitoring

- Document energy consumption per inference for all deployed models

- Calculate total weekly/monthly footprint
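A baseline estimate can start from the standard relationship between IT energy, data center overhead (PUE), and grid carbon intensity. The sketch below is a minimal illustration; the function name `inference_emissions_g` and all input values are hypothetical and should be replaced with measured figures.

```python
def inference_emissions_g(it_energy_kwh: float,
                          pue: float,
                          grid_g_co2_per_kwh: float) -> float:
    """Operational emissions in grams of CO2-equivalent:
    IT energy x facility overhead (PUE) x grid carbon intensity."""
    return it_energy_kwh * pue * grid_g_co2_per_kwh


# Hypothetical inputs for one week of serving.
weekly_requests = 2_000_000
energy_per_request_kwh = 0.0003  # would be measured at the server
weekly_g = inference_emissions_g(
    it_energy_kwh=weekly_requests * energy_per_request_kwh,
    pue=1.2,
    grid_g_co2_per_kwh=400.0,
)
print(f"{weekly_g / 1000:.0f} kg CO2e per week")
```

This covers operational emissions only. Embodied hardware emissions and development overhead need to be tracked separately.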

Phase 2: Optimize the Model (Week 3-6)

- Apply quantization (QLoRA with FP4) to production models

- Evaluate accuracy impact (typically <1% degradation)

- Measure energy reduction (target: 6x improvement)

Phase 3: Optimize Serving (Week 7-10)

- Implement batching for inference workloads

- Explore structured request timing for high-volume services

- Deploy PEARL-style routing for multi-model environments

Phase 4: Optimize Infrastructure (Month 3-6)

- Evaluate data center PUE (Power Usage Effectiveness)

- Consider waterless cooling for new deployments

- Integrate renewable energy sources where feasible
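PUE is the ratio of total facility energy to the energy used by IT equipment, so a value closer to 1.0 means less overhead for cooling and power delivery. The example below uses hypothetical monthly meter readings to show how a cooling upgrade would appear in the metric.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical monthly meter readings before and after an upgrade.
it_kwh = 100_000
before = pue(total_facility_kwh=150_000, it_equipment_kwh=it_kwh)
after = pue(total_facility_kwh=115_000, it_equipment_kwh=it_kwh)
overhead_saved_kwh = (before - after) * it_kwh
print(f"PUE {before:.2f} -> {after:.2f}, saving {overhead_saved_kwh:,.0f} kWh")
```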

Phase 5: Measure, Report, Iterate (Ongoing)

- Publish environmental impact metrics

- Set reduction targets

- Share learnings with the community

The Future of Green AI

By 2028, expect three major shifts:

1. Regulatory Mandates. The EU AI Act and emerging US frameworks will likely require environmental impact reporting for high-impact AI systems.

2. Carbon-Aware Training. Model training will shift to times and locations with the lowest grid carbon intensity, a “follow the sun” strategy for renewable energy.

3. Efficiency as a Differentiator. Just as latency and accuracy are competitive metrics today, energy-per-inference will become a key differentiator for AI services.
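The carbon-aware scheduling idea in the second point can be sketched as a search for the cleanest time window. The code below assumes an hourly forecast of grid carbon intensity is available; the forecast values and the function `greenest_window` are hypothetical.

```python
def greenest_window(forecast: list[float], job_hours: int) -> tuple[int, float]:
    """Return (start hour, mean intensity) of the contiguous window with
    the lowest average grid carbon intensity."""
    if not 1 <= job_hours <= len(forecast):
        raise ValueError("job_hours must fit inside the forecast")
    best_start, best_mean = 0, float("inf")
    for start in range(len(forecast) - job_hours + 1):
        mean = sum(forecast[start:start + job_hours]) / job_hours
        if mean < best_mean:
            best_start, best_mean = start, mean
    return best_start, best_mean


# Hypothetical hourly forecast in gCO2/kWh (index = hour of day).
forecast = [420, 410, 400, 390, 380, 360, 330, 290, 240, 200, 170, 150,
            140, 150, 180, 230, 290, 350, 400, 430, 440, 440, 430, 425]
start, mean = greenest_window(forecast, job_hours=4)
print(f"start at hour {start}, mean {mean:.1f} gCO2/kWh")
```

In this made-up forecast the cleanest four-hour slot falls around midday, when solar output would typically be highest. A production scheduler would also need to handle checkpointing, deadlines, and multiple regions.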

Frequently Asked Questions

Q: How much carbon does a single ChatGPT query produce?

A: No ChatGPT-specific figure is cited in this article. As a reference point, Mistral reports approximately 1.14 grams of CO₂ per 400-token response from Le Chat. The figure varies significantly based on model size, data center efficiency, and grid carbon intensity.

Q: Does quantization affect model quality?

A: Modern quantization techniques (QLoRA with FP4) can achieve near-zero accuracy degradation: 98.60% accuracy vs. a 98.58% baseline in one study.

Q: Can I run compressed models on consumer hardware?

A: Yes. TinyBERT runs efficiently on CPUs and edge devices, consuming only 0.629 kWh vs. 7.197 kWh for the BERT baseline.

Q: Why don’t companies report development emissions?

A: Transparency remains a challenge. Most model developers disclose only final training emissions. The Moshi study is a notable exception.

Q: What is the single most effective reduction technique?

A: For inference, structured request timing can reduce per-request energy by up to 100x. For the model itself, QLoRA quantization achieved a roughly 6x reduction in carbon emissions with minimal accuracy loss.

Conclusion: The Path to Net-Zero AI

The environmental impact of LLMs is real, substantial, and growing. But the tools to address it are already here. Quantization, pruning, distillation, smart routing, green cooling, and transparent measurement form a comprehensive toolkit for sustainable AI development.

The industry is moving toward transparency—Mistral’s lifecycle analysis, the Moshi study, and the ICLR paper are early signs of a broader shift. The technical solutions are proven. The regulatory pressure is building. And the cost of inaction—in carbon, water, and ultimately, public trust—is too high to ignore.