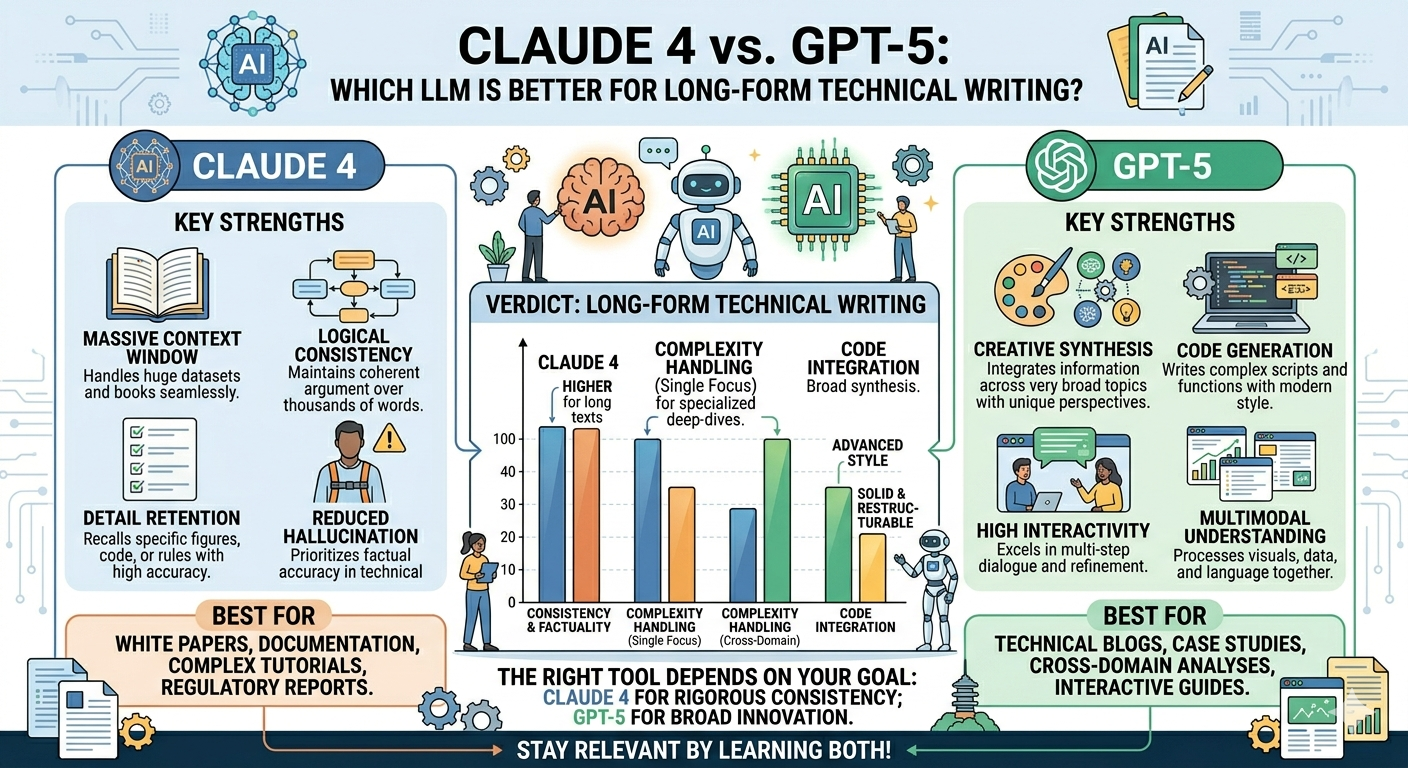

Claude 4 vs. GPT-5: Which LLM Is Better for Long-Form Technical Writing?

The choice between Claude 4 and GPT-5 has become one of the most debated decisions for technical writers, developers, and content professionals in 2026. Both models represent the pinnacle of large language model development, but they excel in fundamentally different ways.

If you’ve ever spent hours refining a technical document only to find the tone inconsistent, or struggled to maintain narrative flow across a 50-page architecture guide, you’ve experienced the limitations of using the wrong tool for the job. This comparison will help you decide which model, Claude 4 or GPT-5, better serves the demands of long-form technical writing.

The Short Answer: Choose Based on Your Priority

Before diving into the details, here’s the bottom line:

Choose Claude 4 (particularly Opus 4.6) if:

- Narrative flow and readability are your top priorities

- You’re producing client-ready documentation, whitepapers, or educational content

- You need consistent tone across hundreds of pages

- You prefer giving high-level guidance rather than micromanaging prompts

Choose GPT-5 if:

- Structured reasoning and technical accuracy are paramount

- You’re writing code-heavy documentation or API references

- You work with iterative, multi-step workflows

- You’re comfortable crafting detailed, structured prompts

Understanding the Contenders

Claude 4 Series

Anthropic’s Claude 4 family, which debuted in May 2025 and reached Opus 4.6 in early 2026, represents a significant evolution in AI-assisted writing. The flagship Claude Opus 4.6 is designed for complex, sustained reasoning tasks, while Claude Sonnet 4 balances speed with capability.

Claude’s defining characteristic is its focus on narrative coherence. Unlike models that treat each response as an isolated output, Claude maintains context and tone across extended interactions, making it particularly suited for long-form content where consistency matters.

Key Specifications:

- Context window: Up to 1 million tokens (beta) for Opus 4.6—enough to process entire book-length documents

- Output style: Polished, natural-flowing text with smooth narrative transitions

- Pricing: $5 per million input tokens, $25 per million output tokens

GPT-5

OpenAI’s GPT-5, first launched in August 2025, unifies the company’s GPT and reasoning model lines. It’s built for structured reasoning, coding proficiency, and factual accuracy.

GPT-5 excels at tasks that require precise, repeatable outputs. Its response to carefully structured prompts is predictable and reliable, making it ideal for technical documentation that follows strict formatting requirements.

Key Specifications:

- Context window: 400,000 tokens

- Output style: Concise, structured, format-adherent

- Pricing: $1.75 per million input tokens, $14 per million output tokens

Head-to-Head Comparison for Technical Writing

1. Narrative Flow and Readability

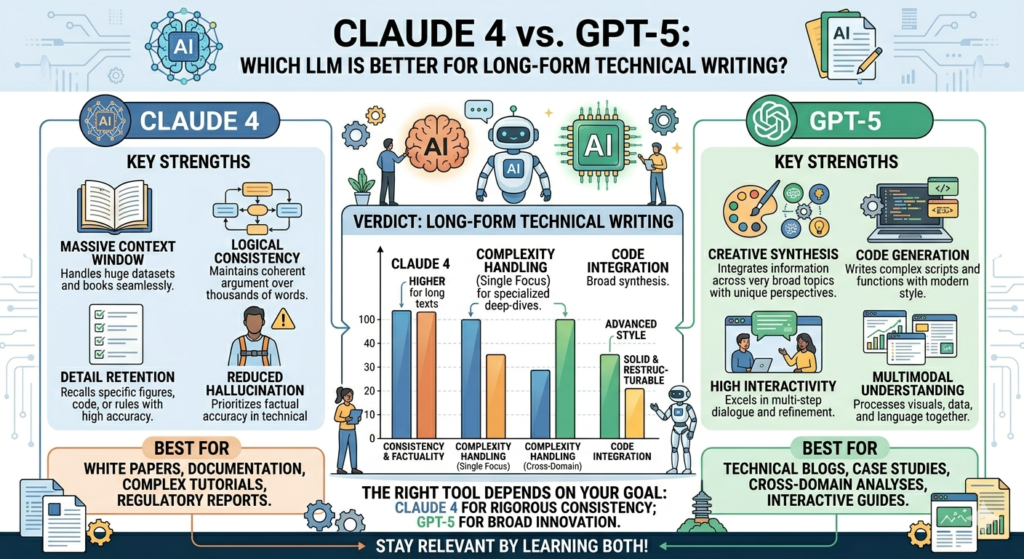

Claude 4 Wins

This is where Claude distinguishes itself most clearly. For long-form technical writing, narrative flow isn’t a luxury—it’s essential for reader comprehension and engagement.

Claude 4 produces text that reads as though it was written by a skilled human technical writer. Its outputs feature:

- Smooth transitions between sections and concepts

- Natural sentence variety that avoids the repetitive structures common in AI-generated text

- Context-aware explanations that build understanding progressively

- Tone consistency maintained across thousands of words

GPT-5, while technically accurate, tends to produce text that feels more mechanical. Its writing is functional rather than engaging: well suited to reference documentation where clarity trumps style, but less suited to content that needs to hold a reader’s attention across dozens of pages.

Winner: Claude 4

2. Structured Reasoning and Accuracy

GPT-5 Wins

When you need technical precision—especially for documentation involving code, algorithms, or complex logical structures—GPT-5 has the edge.

GPT-5’s architecture incorporates explicit reasoning mechanisms that help it maintain logical consistency across complex explanations. On benchmark tests:

- GPT-5 Thinking shows 78% fewer factual errors compared to previous models

- The model achieves 100% on AIME 2025 (mathematical problem-solving)

- It scores 92.4% on GPQA Diamond (graduate-level reasoning)

Claude 4 is no slouch: it leads in coding-specific benchmarks like Terminal-Bench 2.0 and SWE-Bench Verified. Even so, GPT-5’s structured approach to reasoning makes it more reliable for documentation where technical precision is non-negotiable.

Winner: GPT-5

3. Handling Long Documents

Tie—Different Strengths

Both models offer impressive context windows, but they handle long documents differently.

| Aspect | Claude 4 | GPT-5 |
|---|---|---|
| Maximum context | 1M tokens (beta) | 400K tokens |
| Effective recall | Excellent for narrative continuity | Strong for structured retrieval |
| Mid-document performance | Maintains coherence throughout | May show primacy/recency bias |

Claude’s larger context window (1 million tokens vs. GPT-5’s 400,000) means it can theoretically process entire book-length documents in a single session. However, research shows that all models experience some degradation when working at maximum context lengths. This phenomenon, known as “lost in the middle,” means that information in the middle of a long context is recalled less accurately.

For practical purposes, Claude is better suited for documents where narrative continuity matters across sections, while GPT-5 excels at structured retrieval from specific sections of long documents.
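Before sending a long manuscript to either model, it can help to check whether it fits at all. The sketch below is a rough estimate, not a tokenizer-exact count: the 4-characters-per-token ratio is an assumed heuristic for English prose, the model labels are illustrative keys rather than official API identifiers, and the output reserve is an arbitrary placeholder.

```python
# Rough context-window fit check using the limits quoted in this article.
# Assumption: about 4 characters per token; real tokenizers vary by model.
CONTEXT_WINDOWS = {
    "claude-opus-4.6": 1_000_000,  # beta limit quoted above
    "gpt-5": 400_000,              # limit quoted above
}

CHARS_PER_TOKEN = 4  # assumed heuristic, not a measured value


def estimate_tokens(text: str) -> int:
    """Approximate the token count from character length (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits(text: str, model: str, reserve_for_output: int = 8_000) -> bool:
    """Return True if the text plus an output reserve fits the window."""
    return estimate_tokens(text) + reserve_for_output <= CONTEXT_WINDOWS[model]


if __name__ == "__main__":
    manuscript = "x" * 2_400_000  # stand-in for a roughly 600K-token draft
    for name in CONTEXT_WINDOWS:
        print(name, "fits" if fits(manuscript, name) else "needs chunking")
```

Fitting inside the window does not guarantee even recall across it, so a draft near the limit may still be worth splitting by section.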

Winner: Tie

4. Prompting Style and Workflow

Different Approaches for Different Users

How you interact with these models reveals a fundamental difference in their design philosophy.

GPT-5: The Structured Approach

GPT-5 responds best to detailed, structured prompts. The formula that yields the best results is:

Role → Goal → Constraints → Output Format → Example

For example:

“You are a senior technical writer. Create API documentation for a payment processing endpoint. Use Markdown format with the following sections: Overview, Parameters, Request Example, Response Example, Error Codes. Maintain a professional tone. Provide code blocks for all examples.”

Claude 4: The Conversational Approach

Claude performs well with broader, high-level guidance:

High-level goal → Context → Expected tone

For example:

“Create comprehensive documentation for our payment API. The audience is developers integrating our platform. Keep the tone professional but approachable. Focus on making complex concepts easy to understand.”

Claude’s tolerance for ambiguity makes it ideal for writers who prefer to iterate on ideas rather than architect prompts meticulously.
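The two prompt shapes above can be captured as small templates so they stay consistent across a project. This is only a sketch: the class names, field names, and rendering layout are illustrative choices, not a format either vendor prescribes.

```python
from dataclasses import dataclass, field


@dataclass
class StructuredPrompt:
    """Role -> Goal -> Constraints -> Output Format -> Example."""
    role: str
    goal: str
    constraints: list[str] = field(default_factory=list)
    output_format: str = ""
    example: str = ""

    def render(self) -> str:
        parts = [f"You are {self.role}.", self.goal]
        parts += self.constraints
        if self.output_format:
            parts.append(f"Output format: {self.output_format}")
        if self.example:
            parts.append(f"Example:\n{self.example}")
        return "\n".join(parts)


@dataclass
class HighLevelPrompt:
    """High-level goal -> Context -> Expected tone."""
    goal: str
    context: str
    tone: str

    def render(self) -> str:
        return "\n".join([self.goal, self.context, self.tone])


structured = StructuredPrompt(
    role="a senior technical writer",
    goal="Create API documentation for a payment processing endpoint.",
    constraints=["Maintain a professional tone.",
                 "Provide code blocks for all examples."],
    output_format="Markdown with sections Overview, Parameters, "
                  "Request Example, Response Example, Error Codes",
)
high_level = HighLevelPrompt(
    goal="Create comprehensive documentation for our payment API.",
    context="The audience is developers integrating our platform.",
    tone="Keep the tone professional but approachable.",
)

print(structured.render())
print()
print(high_level.render())
```

Keeping the fields separate makes it easy to vary one element, such as the output format, while holding the rest of the prompt fixed between runs.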

Winner: Depends on your working style. GPT-5 suits prompt-crafters; Claude suits a more conversational workflow.

5. Code Documentation

GPT-5 for Code, Claude for Explanation

Technical writing often involves documenting code, and here the distinction becomes particularly interesting.

GPT-5 generates clean, functional code snippets with proper formatting and structure. It excels at:

- Generating complete code examples with proper syntax

- Maintaining consistency across related code blocks

- Following language-specific conventions

Claude 4, however, produces superior explanations of code. When documenting complex systems, Claude’s ability to explain trade-offs, architectural decisions, and implementation nuances in clear, narrative prose is unmatched.

For a typical API documentation project:

- Use GPT-5 to generate the code examples and parameter specifications

- Use Claude to write the explanatory text that helps developers understand why things work the way they do
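One way to apply that split is to tag each section of a documentation outline by kind and group the sections by the model suggested for them. The sketch below assumes a hypothetical outline: the section kinds, section titles, and model labels are all illustrative.

```python
from collections import defaultdict

# Assumed mapping from section kind to the model this article suggests.
ROUTING = {
    "code_example": "gpt-5",
    "parameter_spec": "gpt-5",
    "explanation": "claude",
}


def route_sections(sections: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Group section titles by the model suggested for their kind."""
    plan: dict[str, list[str]] = defaultdict(list)
    for title, kind in sections:
        plan[ROUTING[kind]].append(title)
    return dict(plan)


# Hypothetical outline for a payment API reference.
outline = [
    ("Create charge request", "code_example"),
    ("Charge parameters", "parameter_spec"),
    ("Design rationale", "explanation"),
]

for model, titles in route_sections(outline).items():
    print(model, "->", ", ".join(titles))
```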

Winner: Split—GPT-5 for code generation, Claude for code explanation

6. Creativity and Engagement

Claude 4 Wins

For technical writing that needs to engage readers—tutorials, blog posts, educational content—Claude produces more readable, engaging text.

GPT-5’s creative writing has been described by users as “flatter” than previous versions, with some noting that “poetry feels flatter, philosophical conversations less nuanced, and long-form narratives more mechanical.” This trade-off appears to result from optimizations that improved factual accuracy at the expense of stylistic flair.

Claude maintains a more natural, conversational tone even in technical contexts, making it the better choice when reader engagement matters.

Winner: Claude 4

7. Cost Considerations

GPT-5 Wins on Price

For budget-conscious projects, GPT-5 offers significant advantages:

| Model | Input Cost (per 1M tokens) | Output Cost (per 1M tokens) |
|---|---|---|
| GPT-5 | $1.75 | $14.00 |
| Claude Opus 4.6 | $5.00 | $25.00 |

GPT-5 also offers discounted variants: GPT-5-mini costs $0.25 per million input tokens while retaining ~90% of programming performance.

However, consider that Claude often requires fewer revisions due to its superior narrative flow, potentially offsetting the higher per-token cost.
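To see how revisions could offset the price gap, it helps to run the numbers. The sketch below uses the per-token prices from the table above; the token counts and the number of revision passes are hypothetical inputs, and the model labels are illustrative keys.

```python
# USD per million tokens (input, output), as listed in the table above.
PRICES_PER_MILLION = {
    "gpt-5": (1.75, 14.00),
    "claude-opus-4.6": (5.00, 25.00),
}


def job_cost(model: str, input_tokens: int, output_tokens: int,
             passes: int = 1) -> float:
    """Cost in USD for `passes` generation rounds of the same size."""
    input_price, output_price = PRICES_PER_MILLION[model]
    per_pass = (input_tokens * input_price
                + output_tokens * output_price) / 1_000_000
    return per_pass * passes


# Hypothetical job: 50K tokens of source material, 20K tokens of output.
claude_once = job_cost("claude-opus-4.6", 50_000, 20_000, passes=1)
gpt_once = job_cost("gpt-5", 50_000, 20_000, passes=1)
gpt_thrice = job_cost("gpt-5", 50_000, 20_000, passes=3)

print(f"Claude, 1 pass:   ${claude_once:.4f}")
print(f"GPT-5, 1 pass:    ${gpt_once:.4f}")
print(f"GPT-5, 3 passes:  ${gpt_thrice:.4f}")
```

Under these assumed sizes, one Claude pass costs roughly as much as two GPT-5 passes, so the cheaper model stays cheaper unless it needs about twice as many full regenerations.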

Winner: GPT-5

Practical Recommendations by Use Case

For API Documentation and Developer Guides

Recommended: GPT-5 with Claude for polish

Start with GPT-5 to generate the structured components—endpoint definitions, parameters, request/response examples. Use Claude to refine the explanatory text and ensure narrative flow.

For Whitepapers and Technical Reports

Recommended: Claude 4

The narrative demands of long-form technical content align perfectly with Claude’s strengths. Its ability to maintain consistent tone and logical flow across dozens of pages makes it the superior choice.

For Tutorials and Educational Content

Recommended: Claude 4

When teaching complex topics, reader engagement matters as much as accuracy. Claude’s natural explanatory style and ability to build understanding progressively make it ideal for educational content.

For Code-First Documentation

Recommended: GPT-5

If your primary output is code examples with minimal explanatory text, GPT-5’s precision and structured output capabilities will serve you better.

For Mixed-Media Technical Content

Recommended: Consider Gemini 3.1 Pro

If your technical writing includes diagrams, screenshots, or video explanations, Google’s Gemini 3.1 Pro offers superior multimodal capabilities that neither Claude nor GPT-5 can match.

The Hybrid Approach: Using Both Models

The most effective technical writers in 2026 aren’t choosing one model—they’re using both strategically.

The Claude-First, GPT-Refined Workflow:

- Use Claude for initial drafting and narrative structure

- Use GPT-5 for fact-checking and technical precision

- Return to Claude for final polish and tone consistency

The GPT-First, Claude-Refined Workflow:

- Use GPT-5 for generating structured components and code examples

- Use Claude to expand explanations and improve readability

- Use GPT-5 for final formatting and consistency checks

This dual-model approach leverages the strengths of each while compensating for their respective weaknesses.
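Either workflow is, in effect, a short pipeline in which each stage receives the previous stage’s output. The sketch below shows that shape under clear assumptions: `fake_claude` and `fake_gpt5` are stand-in functions that only tag the text so the example runs offline, and in practice each would wrap a real API call.

```python
from typing import Callable

ModelFn = Callable[[str], str]  # takes a prompt, returns generated text


def run_pipeline(source: str, stages: list[tuple[str, ModelFn]]) -> str:
    """Feed the output of each stage into the next, in order."""
    text = source
    for instruction, model in stages:
        text = model(f"{instruction}\n\n{text}")
    return text


# Stand-in models (hypothetical): they echo the text after the instruction
# and append a tag, so the order of stages is visible in the result.
def fake_claude(prompt: str) -> str:
    return prompt.split("\n\n", 1)[1] + " [claude]"


def fake_gpt5(prompt: str) -> str:
    return prompt.split("\n\n", 1)[1] + " [gpt-5]"


claude_first = [
    ("Draft the document and set its narrative structure.", fake_claude),
    ("Check facts and technical precision.", fake_gpt5),
    ("Polish the prose and unify the tone.", fake_claude),
]

gpt_first = [
    ("Generate structured components and code examples.", fake_gpt5),
    ("Expand explanations and improve readability.", fake_claude),
    ("Apply final formatting and consistency checks.", fake_gpt5),
]

print(run_pipeline("outline", claude_first))
print(run_pipeline("outline", gpt_first))
```

Expressing the stages as data keeps the two workflows interchangeable, so switching between them means passing a different list rather than rewriting the loop.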

Conclusion: No Universal Winner

Across benchmarks, user experiences, and real-world applications, a clear pattern emerges: Claude 4 and GPT-5 serve different purposes in technical writing.

Claude 4 is the better writer. It produces text that flows naturally, maintains consistent tone, and explains complex concepts in accessible ways. If your goal is to create technical content that people actually want to read, Claude is your tool.

GPT-5 is the better technician. It produces precise, structured outputs, handles complex reasoning tasks reliably, and follows detailed instructions with consistency. If your goal is to generate technically accurate documentation with strict formatting requirements, GPT-5 is the superior choice.

The most sophisticated technical writers in 2026 won’t limit themselves to one model. They’ll use Claude for what Claude does best—narrative, explanation, engagement—and GPT-5 for what GPT-5 does best—precision, structure, code. Together, they form a toolkit that exceeds the capabilities of either alone.