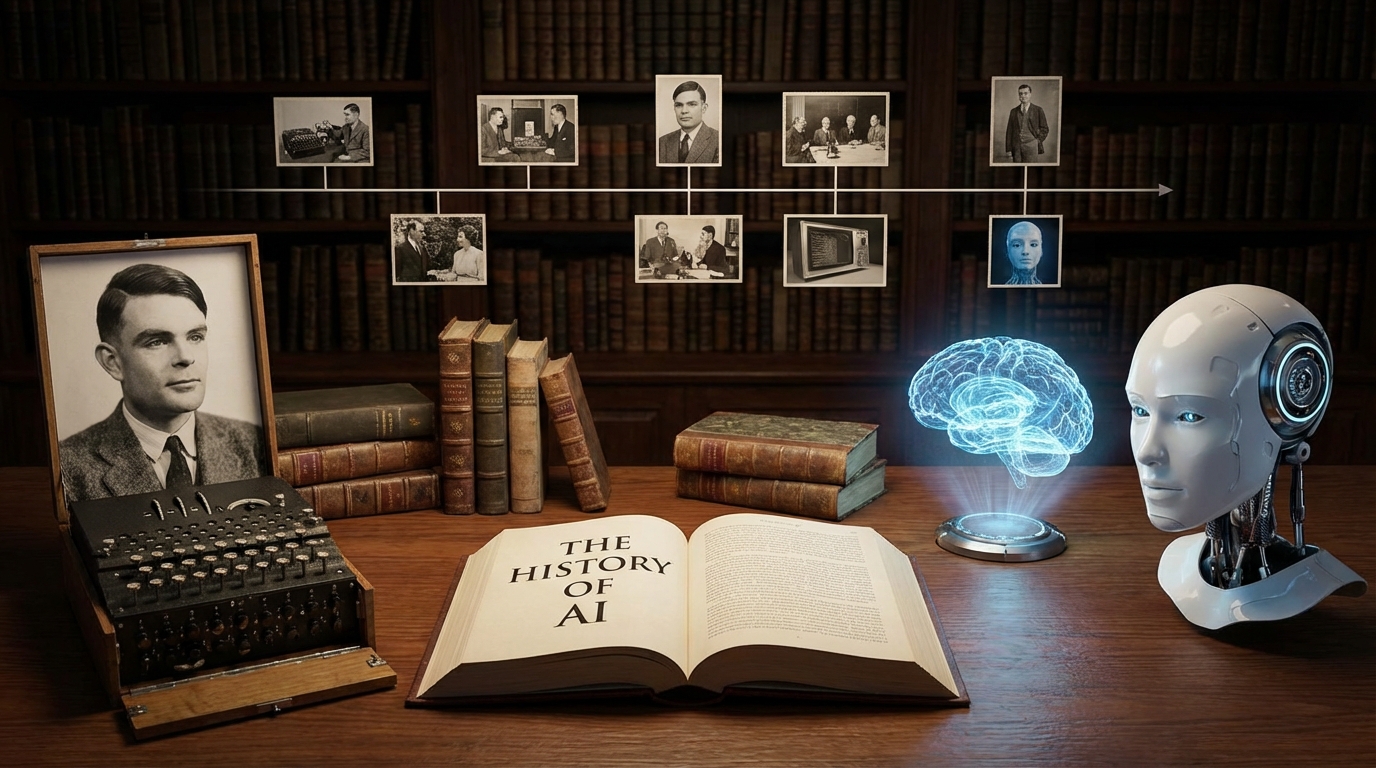

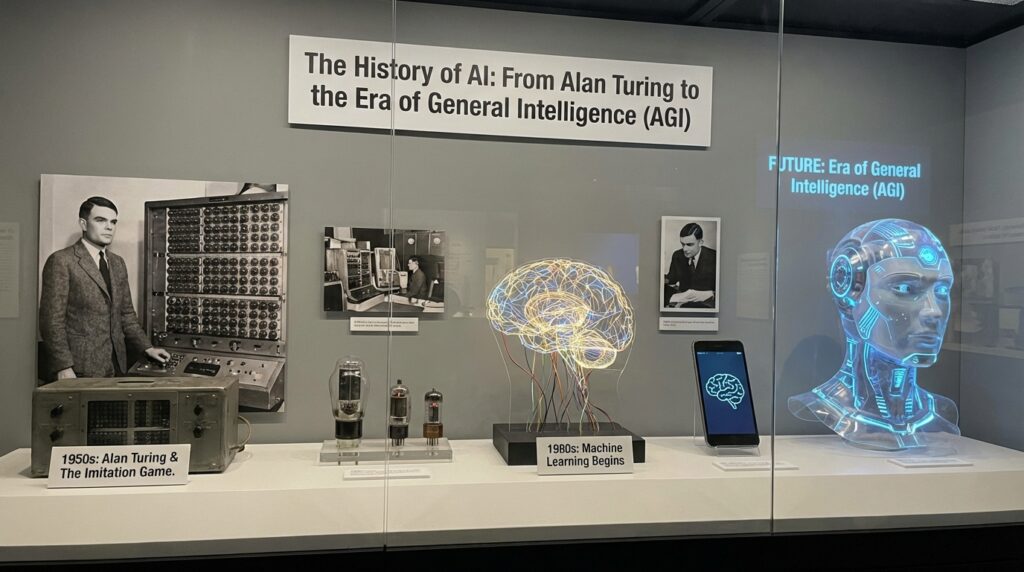

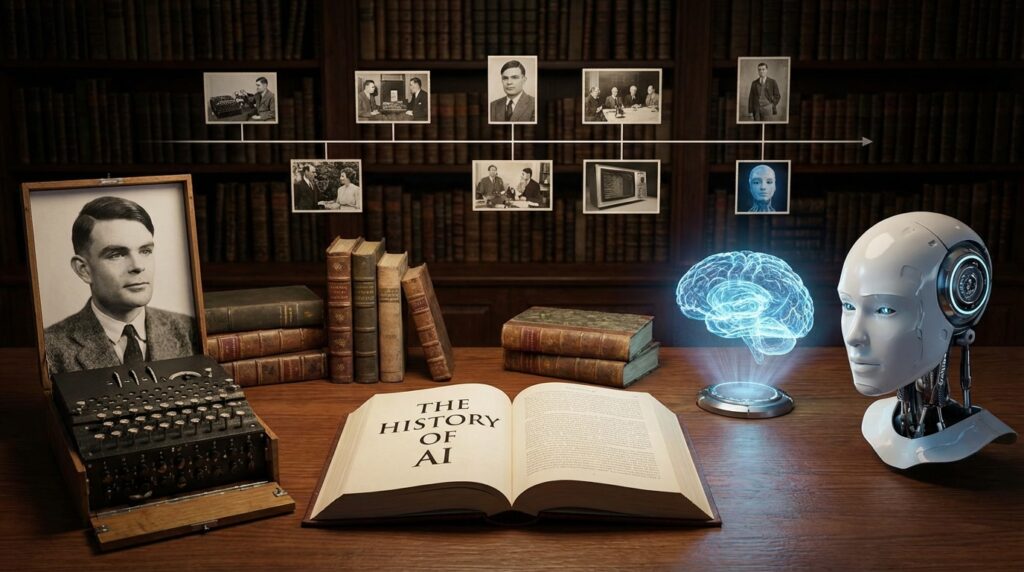

The History of AI: From Alan Turing to the Era of General Intelligence (AGI)

Artificial Intelligence (AI) has become one of the most transformative technologies of the 21st century, reshaping industries, economies, and daily life. But AI’s journey began decades ago, rooted in the visionary ideas of pioneers like Alan Turing. This blog traces the fascinating history of AI, from its conceptual origins to the contemporary quest for Artificial General Intelligence (AGI).

Early Foundations: Alan Turing and the Birth of Machine Intelligence

The story of AI begins with Alan Turing, a British mathematician and logician often considered the father of computer science and artificial intelligence. In 1936, Turing introduced the concept of the Turing Machine, an abstract device that can carry out any computation expressible as an algorithm. This idea laid the groundwork for modern computers.

In 1950, Turing published his seminal paper, “Computing Machinery and Intelligence,” which posed the provocative question: “Can machines think?” To explore this, he proposed the famous Turing Test — a method to determine if a machine’s behavior is indistinguishable from a human’s. This test remains a foundational concept in AI research.

The Dawn of AI Research: 1950s to 1970s

The term “Artificial Intelligence” was coined by John McCarthy in the 1955 proposal for the Dartmouth Conference, a summer workshop held in 1956 and organized by McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon. This event marked the birth of AI as a formal academic discipline.

During this period, early AI research focused on symbolic AI or “good old-fashioned AI” (GOFAI), which involved programming computers with explicit rules and logic. Researchers developed programs that could solve mathematical problems, play games like chess, and understand simple language.

Key milestones of this era include:

- Logic Theorist (1956): Developed by Allen Newell, Herbert A. Simon, and Cliff Shaw, this was one of the first AI programs capable of proving mathematical theorems.

- ELIZA (1966): Created by Joseph Weizenbaum, ELIZA simulated a Rogerian psychotherapist, demonstrating early natural language processing.

- Shakey the Robot (1966-1972): The first mobile robot to reason about its actions, integrating perception, planning, and movement.
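
To make the rule-based style of this era concrete, here is a minimal sketch in the spirit of ELIZA. It is not Weizenbaum's original script: the rules, the `REFLECTIONS` table, and the `respond` function are hypothetical names chosen for illustration. The program matches the input against hand-written patterns and echoes part of it back, with no learning involved.

```python
import re

# Hypothetical rules for illustration; these are not Weizenbaum's original script.
# Each rule pairs a pattern with a reply template that reuses the captured text.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]

# Swap first-person words for second-person ones so the echo reads naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are"}

DEFAULT_REPLY = "Please go on."


def reflect(fragment: str) -> str:
    """Lowercase the fragment and replace first-person words word by word."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(utterance: str) -> str:
    """Return the reply of the first matching rule, or a generic fallback."""
    cleaned = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return DEFAULT_REPLY


if __name__ == "__main__":
    print(respond("I need a break from my work."))
    print(respond("The weather is nice."))
```

Every behavior here comes from a rule someone wrote by hand, which is why an input outside the rules falls through to the generic reply.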

Despite these advances, AI research faced significant challenges. Early optimism gave way to “AI winters” in the mid-1970s and again in the late 1980s, periods of reduced funding and interest caused by unmet expectations.

The Rise of Machine Learning: 1980s to 1990s

The limitations of rule-based AI led researchers to explore alternative approaches. Machine Learning (ML), which enables computers to learn from data rather than relying on explicit programming, gained traction.

During the 1980s, the development of algorithms such as decision trees, neural networks, and genetic algorithms marked significant progress. The backpropagation algorithm, popularized in the mid-1980s, revitalized interest in neural networks by allowing multi-layer networks to be trained effectively.

Notable developments include:

- Expert Systems: AI programs designed to emulate the decision-making ability of human experts, widely used in industries like healthcare and finance.

- Deep Blue (1997): IBM’s chess-playing computer defeated world champion Garry Kasparov, showcasing AI’s potential in complex problem-solving.

The 1990s also saw the rise of the World Wide Web, which dramatically increased the availability of data, setting the stage for modern AI breakthroughs.

The Era of Big Data and Deep Learning: 2000s to 2010s

The 21st century heralded a new era for AI, driven by advances in computing power, massive datasets, and sophisticated algorithms. Deep learning, a subset of machine learning based on artificial neural networks with many layers, became the dominant approach.

Key breakthroughs included:

- ImageNet (2009): The creation of a large-scale image database enabled training of deep neural networks, leading to significant improvements in image recognition.

- AlexNet (2012): This deep convolutional neural network won the ImageNet competition by a large margin, sparking widespread interest in deep learning.

- Natural Language Processing (NLP): Models like word2vec (2013) and later transformers (2017) revolutionized language understanding and generation.

During this period, AI found applications in voice assistants (Siri, Alexa), autonomous vehicles, facial recognition, and recommendation systems, impacting everyday life.

The Quest for Artificial General Intelligence (AGI)

While current AI systems excel at specific tasks — known as narrow AI — the ultimate goal remains the development of Artificial General Intelligence (AGI). AGI refers to machines that possess the ability to understand, learn, and apply intelligence across a broad range of tasks at a human-like level.

The pursuit of AGI involves overcoming significant challenges:

- Transfer Learning: Enabling AI to apply knowledge learned in one domain to different, previously unseen tasks.

- Reasoning and Common Sense: Developing AI that can understand context, nuance, and abstract concepts beyond pattern recognition.

- Autonomy and Creativity: Creating machines capable of independent decision-making and innovative problem-solving.

Prominent organizations such as OpenAI, DeepMind, and academic institutions are actively researching AGI, combining approaches from neuroscience, cognitive science, and computer science.

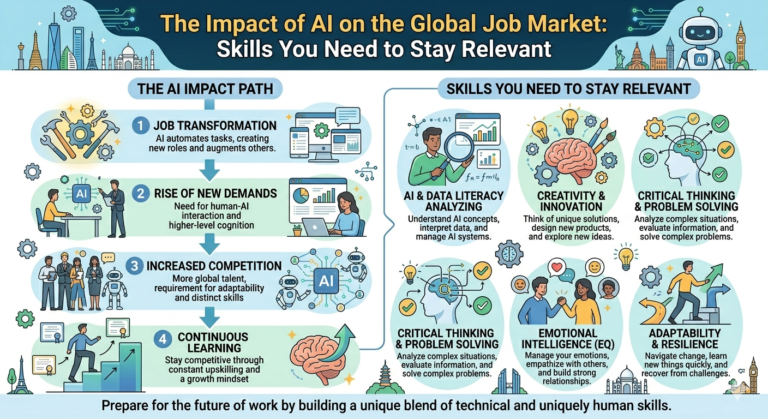

Ethical and Societal Implications

As AI technologies advance towards AGI, ethical considerations become increasingly critical. Issues include:

- Bias and Fairness: Ensuring AI systems do not perpetuate social biases.

- Privacy: Protecting user data in AI-driven applications.

- Job Displacement: Addressing the impact of automation on employment.

- Control and Safety: Ensuring AGI aligns with human values and does not pose risks.

Governments, industry leaders, and ethicists emphasize responsible AI development to maximize benefits while mitigating risks.

Conclusion

From Alan Turing’s visionary ideas in the 1930s and 1950s to today’s cutting-edge research on AGI, the history of artificial intelligence is a testament to human ingenuity and the relentless quest for knowledge. As we stand on the brink of potentially revolutionary advancements, understanding AI’s past helps us navigate its future thoughtfully and responsibly.

The evolution of AI continues to accelerate, promising innovations that could transform society in profound ways. Whether AGI will be achieved soon or remains a distant goal, the journey of AI remains one of the most exciting stories in technology and science.
