Sustainable AI: The Environmental Impact of Global Data Centers in 2026

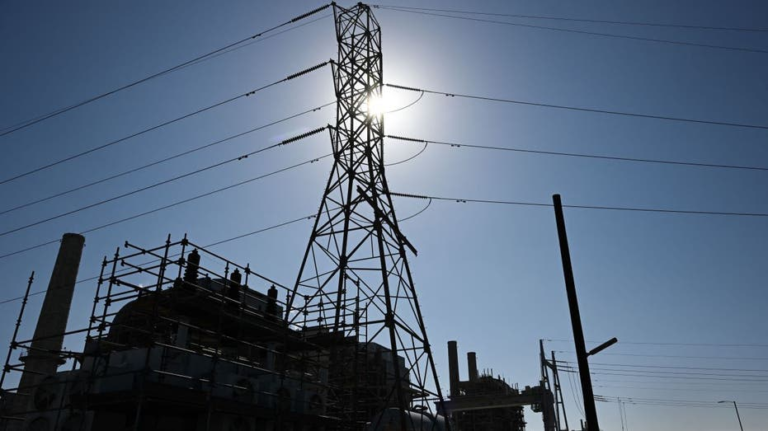

The artificial intelligence revolution has an invisible price tag. While the world marvels at what AI can do (generating videos from text, diagnosing diseases, writing code), a massive physical infrastructure is expanding at breakneck speed beneath the surface. Data centers are the factories of the digital age, and like the factories of the Industrial Revolution, they come with profound environmental consequences.

In 2026, these consequences are becoming impossible to ignore. From local “heat islands” that raise surrounding temperatures by up to 9°C to global electricity consumption rivaling small nations, the environmental footprint of AI is emerging as one of the defining sustainability challenges of our time. This guide examines the scale of the impact, the innovations aimed at mitigating it, and what a truly sustainable AI future might look like.

1. The Hidden Environmental Costs of AI

When you ask an AI model a question, it feels weightless—a few electrons moving through the cloud. But behind that interface lies a physical infrastructure of staggering proportions.

The Scale of the Infrastructure

Global AI spending is projected to reach $2.5 trillion in 2026, with 40 percent of enterprise applications now embedding AI technology. This demand has driven the construction of massive facilities known as “hyperscale” data centers, each housing thousands of servers and spanning over 1 million square feet (roughly 13 football fields).

The scale is accelerating. Currently, there are approximately 30 gigawatt-scale (GW) campuses worldwide, but that figure is expected to rise to over 75 by 2030. A single GW campus can consume up to 10 billion kilowatt-hours (kWh) of electricity annually, equivalent to the consumption of a city with one million residents.
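The annual figure follows from simple unit conversion. The sketch below is a back-of-the-envelope check, not a model of any real campus; the function name and the `load_factor` parameter are illustrative assumptions.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours; leap years ignored


def annual_energy_kwh(power_gw: float, load_factor: float = 1.0) -> float:
    """Annual energy in kWh for a site rated at `power_gw`.

    `load_factor` is the assumed average draw as a fraction of the rating
    (hypothetical parameter, 1.0 means running flat out all year).
    """
    if not 0.0 <= load_factor <= 1.0:
        raise ValueError("load_factor must be between 0 and 1")
    power_kw = power_gw * 1_000_000  # 1 GW = 1,000,000 kW
    return power_kw * HOURS_PER_YEAR * load_factor


if __name__ == "__main__":
    # A constant 1 GW draw for a full year.
    print(f"{annual_energy_kwh(1.0):,.0f} kWh")
```

A constant 1 GW draw works out to about 8.76 billion kWh per year, so the "up to 10 billion kWh" figure corresponds to an average draw of roughly 1.14 GW.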

To put this in perspective, data centers accounted for approximately 1.5% of global power demand in 2020, and this share is growing rapidly as the AI revolution accelerates.

The “Data Center Heat Island” Effect

Perhaps the most striking finding of 2026 research is the localized temperature impact of AI infrastructure. A study by the University of Cambridge’s Earth Observation team, analyzing 20 years of satellite temperature data, has documented a phenomenon researchers call the “data heat island effect”.

Key Findings:

| Impact Metric | Finding |
|---|---|
| Average temperature increase | 2°C (3.6°F) around data centers |
| Extreme temperature increase | Up to 9.1°C (16.4°F) in some locations |
| Geographic reach | Effects extend up to 10 km (6.2 miles) from facilities |
| Population affected | Over 340 million people worldwide |

The researchers examined more than 6,000 data centers located away from dense urban areas to isolate the effect from other heat sources like manufacturing or residential heating. By filtering out seasonal variations and global warming trends, they identified clear temperature rises following data center operations.
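The idea of separating a local signal from seasonal cycles and regional warming can be illustrated with a simple difference-in-differences calculation. The sketch below is not the study's method, which is not described here. The function name, the use of a nearby background series, and the sample temperatures are all assumptions made for illustration.

```python
from statistics import mean


def local_warming_estimate(site_temps, background_temps, opening_index):
    """Estimate local warming as a difference-in-differences.

    site_temps:       temperatures near the facility, one per period
    background_temps: temperatures at a comparable reference area for the
                      same periods (hypothetical control series)
    opening_index:    index of the first period after operations began

    Subtracting the background removes the seasonal cycle and regional
    trend the two series share; comparing the gap before and after opening
    isolates the change that coincides with the facility.
    """
    if len(site_temps) != len(background_temps):
        raise ValueError("series must have the same length")
    if not 0 < opening_index < len(site_temps):
        raise ValueError("opening_index must split the series in two")
    gaps = [s - b for s, b in zip(site_temps, background_temps)]
    return mean(gaps[opening_index:]) - mean(gaps[:opening_index])


if __name__ == "__main__":
    # Made-up values: both series warm over time, the site warms faster.
    site = [20, 21, 22, 25, 26]
    background = [19, 20, 21, 22, 23]
    print(local_warming_estimate(site, background, opening_index=3))
```

In this toy data both series share the same upward trend, and only the extra gap that opens after the facility starts operating is attributed to it.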

Regional Examples:

- Bajio region, Mexico: A growing data center hub has experienced approximately 2°C (3.6°F) of unexplained warming over two decades.

- Aragon, Spain: A European AI data center hub shows similar warming not observed in neighboring provinces.

- U.S. West Coast: One AI computing cluster recorded up to 9°C of localized warming.

- Southwest China: A data center cluster recorded 5°C of warming.

- Western Europe: A cluster recorded 4.2°C of warming.

The mechanism is straightforward: data centers consume enormous amounts of electricity, and much of that energy is ultimately released as heat. Server racks generate significant thermal output, and cooling systems add more heat as they work to remove it. This waste heat accumulates locally, creating persistent thermal anomalies.

Water Consumption: The Hidden Footprint

Water is another critical resource under pressure. Data center cooling systems can consume vast amounts of water—particularly in regions already facing water stress.

The Climate Neutral Data Centre Pact has established a water usage efficiency trajectory, aiming to reduce consumption in water-stressed sites from approximately 1.8 L/kWh to 0.4 L/kWh by 2040. While achievable, this requires significant innovation in cooling technology and water management.

The challenge is compounded by the fact that water consumption is often indirect. Even data centers that use no water on-site may consume significant water indirectly through electricity generation, particularly in regions that rely on hydropower or thermal power plants with evaporative cooling.
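To make the split between on-site and indirect water use concrete, the sketch below combines the two. It assumes water usage effectiveness (WUE) is expressed in liters per kWh of IT energy, and it introduces a hypothetical `grid_water_l_per_kwh` parameter for the water intensity of the electricity supply. The sample values are illustrative, apart from the 1.8 and 0.4 L/kWh figures quoted above.

```python
def water_footprint_liters(it_energy_kwh, onsite_wue_l_per_kwh,
                           pue, grid_water_l_per_kwh):
    """Return (on_site_liters, indirect_liters) for a given IT energy use.

    onsite_wue_l_per_kwh: liters consumed on-site per kWh of IT energy
    pue:                  total facility energy / IT energy
    grid_water_l_per_kwh: liters consumed by power generation per kWh
                          delivered (hypothetical, varies by grid)
    """
    if it_energy_kwh < 0 or pue < 1.0:
        raise ValueError("energy must be non-negative and PUE at least 1")
    on_site = it_energy_kwh * onsite_wue_l_per_kwh
    # Indirect water scales with total facility energy, not just IT energy.
    indirect = it_energy_kwh * pue * grid_water_l_per_kwh
    return on_site, indirect


if __name__ == "__main__":
    # Same IT load at today's and the 2040 target on-site WUE.
    for wue in (1.8, 0.4):
        on_site, indirect = water_footprint_liters(1000, wue, 1.2, 2.0)
        print(f"WUE {wue}: on-site {on_site:.0f} L, indirect {indirect:.0f} L")
```

With these made-up grid numbers, the indirect share exceeds the on-site share even at the current 1.8 L/kWh level, which is the point the paragraph above makes about zero-water sites.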

2. The Energy Challenge: Powering the AI Revolution

Energy consumption is the most visible environmental impact of AI data centers—and the most urgent to address.

The Gigawatt Era

The scale of new AI infrastructure represents a qualitative shift. In 2026, data center developers are no longer planning individual buildings but entire “power-anchored” industrial complexes.

SoftBank’s Ohio Project: The most dramatic example is SoftBank’s planned 10 GW AI data center campus at the former Portsmouth Gaseous Diffusion Plant in Pike County, Ohio. This facility will pair 10 GW of computing capacity with 9.2 GW of natural gas generation, making it one of the largest integrated power-and-compute developments in the world.

The project’s scale forces a fundamental rethinking of how AI infrastructure is conceived. Rather than adapting to existing grid capacity, developers are building generation, transmission, and compute in parallel as a coordinated system. This includes:

- $4.2 billion in new 765-kV transmission lines (a voltage class capable of carrying significantly more capacity than standard 345-kV lines)

- Four new substations

- Interstate gas pipeline development

- Coordinated deployment across federal land leased from the U.S. Department of Energy

The reliance on natural gas reflects current constraints: dispatchable gas generation remains one of the few options capable of delivering multi-gigawatt capacity within the timeframes AI developers are targeting. While nuclear power and renewables attract interest, their deployment timelines remain extended.

Carbon Emissions and Climate Targets

The carbon footprint of AI data centers is substantial. According to the Science Based Targets Initiative (SBTi), the technology sector must cut greenhouse gas emissions by 42% by 2030 (against a 2015 baseline) to achieve net zero and limit global warming to 1.5°C.

Data centers currently consume 10-50 times more energy per square foot than a commercial office building, making them a critical focus for decarbonization. The combined energy use of cooling and power conditioning can be substantial: in large-scale efficient data centers, cooling uses 10–20% as much energy as IT equipment itself; in smaller, less efficient facilities, cooling can use 80–120% as much electricity as the IT equipment.

The “Tokens Per Watt” Metric

As the industry evolves, so do its efficiency metrics. Traditional measures like Power Usage Effectiveness (PUE), the ratio of total facility energy to IT equipment energy, are being supplemented by a more meaningful metric: “tokens per watt”.

This shift reflects a fundamental change in how value is measured. PUE only tells us how efficiently power is delivered to servers. “Tokens per watt” tells us how much actual AI output is produced from each unit of energy, focusing on the productive yield rather than just the efficiency of delivery.
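The two metrics can be compared side by side in a few lines. This is a minimal sketch under stated assumptions: "tokens per watt" is read here as token throughput (tokens per second) divided by average power draw in watts, which is the same as tokens per joule, and the function names and sample values are illustrative rather than an industry-standard definition.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def pue_from_overheads(cooling_fraction: float, other_fraction: float = 0.0) -> float:
    """PUE implied by overheads expressed as fractions of IT energy.

    A cooling_fraction of 0.2 means cooling uses 20% as much energy as the
    IT equipment. `other_fraction` (power conditioning, lighting, etc.) is
    an assumed catch-all.
    """
    return 1.0 + cooling_fraction + other_fraction


def tokens_per_watt(tokens_per_second: float, average_power_watts: float) -> float:
    """Tokens produced per second per watt drawn (equivalently, per joule)."""
    if average_power_watts <= 0:
        raise ValueError("power must be positive")
    return tokens_per_second / average_power_watts


if __name__ == "__main__":
    print(pue(1_200_000, 1_000_000))          # facility-level delivery efficiency
    print(pue_from_overheads(0.8))            # inefficient site, cooling only
    print(tokens_per_watt(50_000, 10_000))    # productive output per unit power
```

Two facilities with the same PUE can differ widely on the second metric, because PUE says nothing about how much useful output the IT equipment produces with the energy it receives.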

3. The Innovation Response: Sustainable AI Infrastructure

The environmental challenges of AI are not being ignored. 2026 has seen significant innovations in how data centers are designed, built, and operated.

Cooling Technology Revolution

Cooling represents one of the largest opportunities for impact reduction. A landmark life cycle assessment conducted by WSP and Microsoft, published in Nature, quantified the benefits of advanced cooling technologies.

Key Findings:

| Cooling Technology | GHG Reduction | Energy Reduction | Water Reduction |
|---|---|---|---|
| Liquid cooling (overall) | 15-21% | 15-20% | 31-52% |
| Cold plate | Significant | Significant | Most efficient |
| One-phase immersion | Significant | Significant | Moderate |
| Two-phase immersion | Significant | Significant | Higher water impact |

For a typical hyperscale facility, these reductions are equivalent to removing 4,500 cars from U.S. roads annually, powering 22,200 U.S. homes, or saving 20 Olympic swimming pools of water.

The research revealed three critical lessons:

Lesson 1: Evaluate beyond carbon. Water consumption requires equal attention. Some cooling technologies that use no water on-site may actually increase overall water consumption through higher electricity use, depending on the grid’s water intensity.

Lesson 2: Renewable energy is essential but not sufficient. Switching to renewable energy can reduce operational GHG emissions by 85-90%, regardless of cooling technology. However, embodied emissions from hardware and infrastructure remain significant and require attention across the supply chain.

Lesson 3: Building impacts matter at scale. While the per-virtual-core impact of buildings is small, the sheer volume of new construction means that embodied carbon from materials like concrete and steel must be addressed. A separate life cycle assessment by Data4 and APL Data Center found that equipment and materials production accounts for 39% of a data center’s carbon footprint over 20 years, nearly as significant as operations (48%).

AI-Optimized Operations

Ironically, AI itself is becoming a critical tool for reducing the environmental impact of data centers. Machine learning algorithms now optimize:

- Airflow and cooling systems in real-time

- Pump speeds and power distribution

- Workload scheduling to shift compute to times and locations with surplus renewable energy

- Predictive maintenance to prevent failures and optimize equipment lifespan
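The workload-scheduling item above can be illustrated with a small carbon-aware scheduler. This is a sketch under assumptions, not any vendor's algorithm: it takes a hypothetical hourly forecast of grid carbon intensity (gCO2/kWh) and picks the contiguous window with the lowest average for a deferrable job. The forecast values are made up.

```python
def best_start_hour(carbon_forecast, job_hours):
    """Pick the start hour that minimizes average carbon intensity.

    carbon_forecast: hourly grid carbon intensity forecast (gCO2/kWh),
                     hypothetical input from a grid data provider
    job_hours:       contiguous hours the deferrable job needs

    Returns (start_index, average_intensity) for the cleanest window.
    Ties go to the earliest window.
    """
    n = len(carbon_forecast)
    if job_hours <= 0 or job_hours > n:
        raise ValueError("job_hours must fit inside the forecast")
    # Sliding-window sum: update in O(1) per step instead of re-summing.
    window = sum(carbon_forecast[:job_hours])
    best_sum, best_start = window, 0
    for start in range(1, n - job_hours + 1):
        window += carbon_forecast[start + job_hours - 1] - carbon_forecast[start - 1]
        if window < best_sum:
            best_sum, best_start = window, start
    return best_start, best_sum / job_hours


if __name__ == "__main__":
    forecast = [500, 450, 300, 200, 250, 400]  # illustrative values
    start, avg = best_start_hour(forecast, job_hours=2)
    print(f"start at hour {start}, average {avg} gCO2/kWh")
```

A production scheduler would also weigh deadlines, capacity, and location, but the core choice is the same: move flexible compute toward the hours when the grid is cleanest.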

Huawei’s AI-Powered Green Site solution, launched at MWC 2026, exemplifies this approach. The system uses intelligent algorithms integrating weather forecasts, grid availability, and load predictions to coordinate solar, energy storage, and diesel systems. In South Africa, this solution has reduced fuel consumption by 75%, saving over $10,000 per site annually and cutting carbon emissions by 18 tons.

Circular Economy and Materials Innovation

The embodied carbon of data centers is receiving increased attention. Key innovations include:

- Low-carbon concrete and geopolymer alternatives

- Modular timber structures for appropriate architectural elements

- Design for disassembly, enabling material recovery during refresh cycles

- Certified recycled steel and reclaimed metals

Data4 has implemented these principles through its “Data4Good” program, incorporating low-carbon concrete, renewable energy power purchase agreements, and waterless cooling systems that are 25 times more efficient than the industry average.

Heat Recovery and Grid Services

Data centers are increasingly being designed not just as consumers but as contributors to local energy systems.

Heat Recovery: Waste heat from data centers can warm nearby buildings, supporting district heating networks. WSP is currently working on a project in Manhattan to supply heat from two data centers to a thermal energy network.

Grid Flexibility: Energy storage systems at data centers can participate in frequency response and demand shifting, helping stabilize the grid. Huawei reports that in Northern Europe, this approach has generated €2,000 per site annually for operators.

4. The Policy Response: Governance for Sustainable AI

As the environmental impacts of AI data centers become clearer, governments are beginning to respond.

European Union Leadership

The European Union has emerged as the most proactive regulator. Following the 2025 Global Data Center Environmental Guidelines, the EU launched an AI infrastructure green upgrade program focused on:

- Promoting efficient low-carbon cooling technologies

- Establishing spatial planning standards for data center density

- Increasing R&D investment in green technology

- Creating support funds for low-power cooling solutions

The EU’s approach emphasizes systemic planning rather than reactive mitigation—designing AI infrastructure as an integrated part of sustainable development rather than an afterthought.

United States: Federal Land and Strategic Infrastructure

In the United States, the Department of Energy has taken a different approach: using federal land as a platform for coordinated AI infrastructure development. The Portsmouth project in Ohio, located on a former uranium enrichment site, is explicitly framed as a national security asset that helps the United States “win the AI race”.

This approach has significant environmental implications. By controlling land, transmission, and generation as an integrated system, federal agencies can enforce sustainability requirements that would be difficult to mandate in purely private development.

The Cost Allocation Debate

One of the most contentious issues is who pays for the grid upgrades required to serve AI-scale loads. Utilities, regulators, consumer advocates, and developers are divided:

- Developers point to economic development benefits

- Consumer advocates counter that residential ratepayers should not subsidize infrastructure for hyperscale demand

The Ohio project offers a potential model: SB Energy has committed to funding $4.2 billion in transmission infrastructure directly, rather than passing costs through to ratepayers. This structure, if validated, could influence how utilities and regulators across the U.S. handle cost allocation for AI infrastructure.

5. The Future: A Sustainable Path Forward

The environmental trajectory of AI is not predetermined. Several developments could reshape the industry’s footprint over the coming decade.

Technological Trajectories

Liquid Cooling Standardization: Liquid cooling is transitioning from a specialized solution to standard practice, with focus shifting to interoperability and standardized controls. As adoption scales, costs will decrease, making advanced cooling accessible to a broader range of operators.

Modular and Industrialized Delivery: Data center development is becoming industrialized, with standardized power and cooling blocks that can be deployed rapidly across regions. This shift enables quality control and sustainability features to be “baked in” rather than retrofitted.

Small Modular Nuclear Reactors (SMRs): While not yet operational at scale, SMRs remain a long-term option for carbon-free baseload power. Some operators are repurposing decommissioned nuclear sites, recognizing that former nuclear locations offer the safest and most practical bases for next-generation reactors.

Hydrogen and Long-Duration Storage: Hydrogen fuel cells and advanced storage systems could provide the firm, dispatchable power that AI workloads require without fossil fuel emissions. These technologies remain in development but are moving steadily from concept toward feasibility.

Design Principles for Sustainable AI

Experts at Arup have outlined a vision for data centers as “good neighbors”: facilities that contribute positively to their communities rather than simply consuming resources.

The “Good Neighbor” Framework:

| Domain | Principle | Example |
|---|---|---|
| Energy | From load to flexible, clean, locally useful power | Co-located renewables, grid services, waste-heat recovery |
| Water | Stewardship by design | Closed-loop systems, non-potable sources, zero-freshwater ambitions |
| Materials | Circular recovery | Design for disassembly, reclaimed metals, traceable components |
| Community | Early, transparent, beneficial engagement | STEM education funding, jobs, shared infrastructure benefits |

This framework represents a fundamental shift: from data centers as “black boxes” that consume resources to data centers as integrated components of sustainable communities.

The Call for Systemic Thinking

The Cambridge research team’s lead author, Andrea Marinoni, emphasizes that we are at a critical juncture: “There still might be time to consider the possibility of a different path … without affecting the demand of AI and its ability to provide progress for mankind”.

Deborah Andrews, emeritus professor at London South Bank University, offers a sobering assessment: “The ‘rush for AI-gold’ appears to be overriding good practice and systemic thinking … and is developing far more rapidly than any broader, more sustainable systems”.

6. What You Can Do: A Call to Action

For organizations and individuals using AI, the environmental impact is not someone else’s problem. Here are practical steps to contribute to a more sustainable AI future.

For Organizations

1. Choose Sustainable Providers. Ask cloud and AI service providers about their sustainability practices. What is their PUE? What percentage of energy is renewable? What cooling technologies do they use? Do they have heat recovery programs?

2. Optimize Workload Efficiency. Not all AI tasks require the most powerful models. Match model size to task complexity. Schedule non-time-critical training during periods of renewable energy surplus. Delete unused models and datasets.

3. Demand Transparency. Support providers who disclose their environmental impact. Data4’s publication of a comprehensive life cycle assessment sets a new standard for transparency; encourage your providers to follow suit.

4. Consider Embodied Carbon. When procuring hardware or cloud services, consider not just operational efficiency but the embodied carbon of the infrastructure supporting your work.

For Developers and Engineers

1. Design for Efficiency. Implement AI-powered operations management. Use digital twins to optimize performance. Design for maintainability and adaptability—systems that can evolve with changing conditions.

2. Prioritize Water Stewardship. In water-stressed regions, prioritize closed-loop cooling and non-potable water sources. Consider the water intensity of your electricity supply.

3. Embrace Standardization. Support industry efforts to standardize cooling interfaces, monitoring systems, and reporting frameworks. Interoperability accelerates innovation.

For Policymakers

1. Require Transparency. Mandate environmental impact disclosure for data centers, including PUE, WUE, carbon intensity, and heat recovery.

2. Align Incentives. Design tax structures and grid interconnection policies that reward sustainability rather than simply throughput.

3. Coordinate Land Use Planning. Treat AI infrastructure as a strategic resource requiring integrated planning for energy, water, and community impacts.

4. Invest in R&D. Support development of advanced cooling technologies, long-duration storage, and low-carbon materials for data center construction.

Conclusion: The Choice Before Us

The AI revolution is not inherently unsustainable. The environmental impacts of data centers—heat islands, water consumption, carbon emissions—are problems of design and policy, not inevitabilities of technology.

The innovations profiled in this guide demonstrate that a different path is possible. Liquid cooling can reduce water consumption by roughly a third to a half. AI-optimized operations have cut fuel consumption by 75% in at least one deployment. Heat recovery can transform data centers from energy consumers into community assets. Renewable energy can reduce operational emissions by 85-90%.

But these innovations require intentional adoption. They require transparency to measure what matters. They require systemic thinking rather than the “rush for AI-gold.” And they require accountability—from the companies building AI infrastructure, from the governments regulating it, and from the users whose demand drives its growth.

The next decade will determine whether AI becomes a contributor to climate solutions or an accelerant of climate crisis. The technology is not the problem—the choices we make about how to deploy it will determine the outcome.

We still have time to choose the sustainable path. But that window is closing.