Sora 2 and the Future of AI Video: What Content Creators Need to Know

The landscape of video creation has shifted seismically. In late 2025, OpenAI released Sora 2, the successor to what was already considered the gold standard in AI video generation. But Sora 2 isn’t just an incremental upgrade: it represents a fundamental rethinking of how AI can assist in the creative process.

For content creators, this moment feels eerily familiar. It echoes the release of GPT-3 in 2020, which transformed written content creation. Now, video—the most engaging, most time-consuming, and often most expensive medium—is having its ChatGPT moment.

This guide explores what Sora 2 can do, how it compares to other AI video tools, and—most importantly—what content creators need to know to navigate the rapidly evolving landscape of AI-generated video in 2026.

What Is Sora 2?

Sora 2 is OpenAI’s advanced AI video generation model, capable of creating realistic and imaginative video scenes from text descriptions, images, or existing video clips. Unlike earlier models that produced short, glitchy clips with obvious artifacts, Sora 2 generates cinematic-quality footage with remarkable physical realism and temporal consistency.

The model is available through OpenAI’s API and is integrated into Microsoft Azure’s OpenAI Service, making it accessible to developers and creators at scale.

Key Capabilities at a Glance

| Capability | Details |
|---|---|
| Maximum Resolution | Up to 4K (3840×2160) with sora-2-pro model |
| Maximum Duration | Up to 20 seconds per clip (60 seconds with Pro plan) |
| Input Types | Text-only, image + text, video + text |
| Audio Generation | Native audio output with video |
| Character References | Upload characters once; reuse consistently across videos |
| Remix Feature | Targeted edits to existing videos without full regeneration |
| Video Extension | Extend existing clips using full context, not just last frame |

Model Variants

OpenAI offers two primary versions of Sora 2:

- Sora 2: Supports 720×1280 and 1280×720 resolutions. Ideal for standard content creation.

- Sora 2 Pro: Supports higher resolutions including 1024×1792, 1792×1024, 1080×1920, and 1920×1080. Delivers enhanced detail and texture accuracy.

What’s New in Sora 2?

The leap from the original Sora to Sora 2 is substantial. Here are the most significant improvements:

1. Character Consistency

One of the biggest challenges in AI video has been maintaining consistent characters across different shots. Sora 2 introduces character references: upload a short reference clip once, and the model can reuse that character across multiple videos with consistent appearance.

This feature opens up possibilities for episodic content, brand mascots, and narrative storytelling that were previously impossible with AI video tools.

2. Extended Duration

Sora 2 now supports clips up to 20 seconds per generation, with Pro plan users accessing 60-second videos. While this may seem modest compared to traditional video, consider the implications: a 60-second fully AI-generated commercial, a 20-second product demo, or a multi-shot narrative sequence, all without cameras, actors, or sets.

3. Native Audio Generation

Unlike earlier models that required separate audio tools, Sora 2 generates synchronized audio directly with video output. The model can produce diegetic sound (audio that originates from within the scene), including dialogue, ambient noise, and sound effects that match the visual action.

4. Remix and Edit Capabilities

Sora 2 introduces the ability to remix existing videos through targeted adjustments rather than starting from scratch. This allows creators to iterate on generated content (adjusting lighting, modifying actions, or refining details) without regenerating the entire clip.

5. Video Extension

Instead of generating clips in isolation, Sora 2 can extend existing videos using the full original clip as context, not just the last frame. This enables the creation of longer, coherent sequences that maintain narrative and visual continuity.

How Sora 2 Compares to Competitors

Sora 2 isn’t the only AI video generator in 2026. Understanding how it stacks up against alternatives helps creators choose the right tool for their needs.

Quick Comparison

| Platform | Max Resolution | Max Length | Starting Price | Best For |
|---|---|---|---|---|
| Sora 2 | 4K | 60 seconds | $29.99/month | Cinematic quality, storytelling, physics realism |
| Runway Gen-4 | 1080p | 16 seconds | $15/month | Creative professionals, multi-scene consistency |
| Pika Labs 2.5 | 1080p | 10 seconds | Free/$8/month | Social media, effects, fast iteration |
| Kling 2.1 | 1080p | 10 seconds | $6.99/month | Photorealistic video, lip-sync |
| Google Veo 3 | 4K | 30 seconds | $0.15-$0.40/second | Premium production, advanced motion |

Sora 2 vs. Runway Gen-4

Runway Gen-4 excels at character consistency across shots and offers powerful integrated editing tools. It’s ideal for creative professionals who want to maintain a consistent aesthetic across multiple scenes.

Sora 2, however, leads in photorealism and physics understanding. The steam rising from a coffee cup, reflections in glass, and natural human movement are noticeably more convincing in Sora 2 outputs. For creators prioritizing realism over stylization, Sora 2 is the superior choice.

Sora 2 vs. Pika Labs 2.5

Pika Labs positions itself as the accessible, budget-friendly option. With a free tier and fast generation times (under 2 minutes), it’s ideal for social media creators who need quick, effects-heavy content.

Sora 2 targets the premium market. Higher price points deliver significantly better quality, but the investment only makes sense for creators who need cinematic-grade output.

The Hybrid Approach

Many professional creators in 2026 use multiple tools strategically:

- Sora 2 for hero shots, establishing scenes, and high-quality sequences

- Runway Gen-4 for maintaining consistency across character-driven narratives

- Pika Labs for quick iterations and social media content

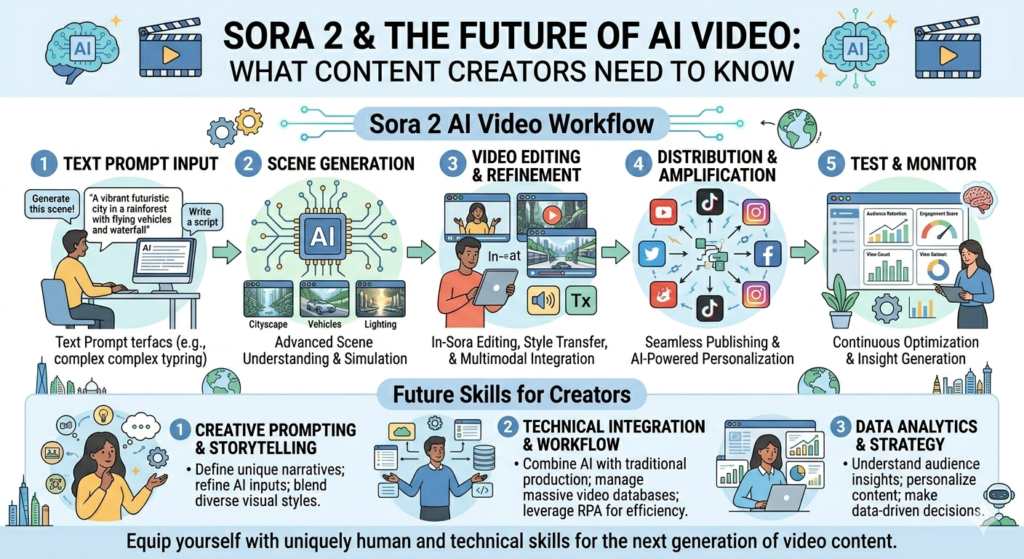

The Prompting Craft: Getting the Most from Sora 2

Unlike text generation, video prompting requires thinking like a cinematographer. OpenAI’s official prompting guide provides specific recommendations for achieving professional results.

The Philosophy: Creative Wish List, Not Contract

Think of prompting like briefing a cinematographer who has never seen your storyboard. If you leave out details, they’ll improvise, which may or may not align with your vision.

Key principle: Shorter prompts give the model more creative freedom (expect surprising results). Longer, more detailed prompts restrict creativity but offer more control.

What Must Be Set in API Parameters

Certain attributes cannot be requested in prose; they must be set explicitly in your API call:

- model: sora-2 or sora-2-pro

- size: resolution in {width}x{height} format

- seconds: clip length (4, 8, 12, 16, or 20 seconds)

- characters: references to uploaded character IDs
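As a minimal sketch, the settings above could be collected and checked before any request is sent. The helper name `build_video_params`, the `prompt` field, and the validation rules are assumptions for illustration; only the four parameter names and their allowed values come from the list above, and no real client call is shown.

```python
import re

# Values copied from the list above; treat them as this guide's claims, not a spec.
ALLOWED_MODELS = {"sora-2", "sora-2-pro"}
ALLOWED_SECONDS = {4, 8, 12, 16, 20}
SIZE_PATTERN = re.compile(r"^\d+x\d+$")  # {width}x{height}


def build_video_params(prompt, model="sora-2", size="1280x720", seconds=8, characters=None):
    """Hypothetical helper: validate the settings and return a plain dict."""
    if model not in ALLOWED_MODELS:
        raise ValueError(f"unknown model: {model}")
    if not SIZE_PATTERN.match(size):
        raise ValueError(f"size must look like 1280x720, got: {size}")
    if seconds not in ALLOWED_SECONDS:
        raise ValueError(f"seconds must be one of {sorted(ALLOWED_SECONDS)}")
    params = {"model": model, "size": size, "seconds": seconds, "prompt": prompt}
    if characters:
        # Character IDs are only included when the caller supplies them.
        params["characters"] = list(characters)
    return params


params = build_video_params("Wide shot of a rainy street at night", seconds=4)
print(params)
```

Keeping these values out of the prompt text and in one validated dict makes it harder to ask for, say, a 5-second clip in prose and silently get something else.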

Prompt Structure That Works

A clear prompt should describe a shot as if you were sketching it onto a storyboard:

- Camera framing: Close-up? Wide shot? Eye level? Low angle?

- Depth of field: Shallow focus or deep focus?

- Action beats: Describe movement in discrete steps

- Lighting and palette: Time of day, color temperature, mood

- Style cues: “1970s film,” “IMAX-scale scene,” “16mm black-and-white”
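The checklist above can be turned into a small template so that no element is forgotten between shots. This is only a sketch: the `ShotBrief` class and its field names are hypothetical, and the sentence layout it produces is one possible convention, not a format the model requires.

```python
from dataclasses import dataclass, field


@dataclass
class ShotBrief:
    """Hypothetical container mirroring the storyboard checklist above."""
    framing: str
    depth_of_field: str
    action_beats: list = field(default_factory=list)
    lighting: str = ""
    style: str = ""

    def to_prompt(self):
        parts = [f"{self.framing}, {self.depth_of_field}."]
        if self.action_beats:
            # Number the beats so each movement reads as a discrete step.
            beats = " ".join(f"({i}) {b}." for i, b in enumerate(self.action_beats, 1))
            parts.append(f"Action: {beats}")
        if self.lighting:
            parts.append(f"Lighting: {self.lighting}.")
        if self.style:
            parts.append(f"Style: {self.style}.")
        return " ".join(parts)


brief = ShotBrief(
    framing="Low-angle wide shot",
    depth_of_field="deep focus",
    action_beats=["Cyclist pedals three times", "brakes", "stops at crosswalk"],
    lighting="night, neon reflections on wet asphalt",
    style="16mm black-and-white",
)
prompt = brief.to_prompt()
print(prompt)
```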

Weak vs. Strong Prompts

| Weak Prompt | Strong Prompt |
|---|---|
| “A beautiful street at night” | “Wet asphalt, zebra crosswalk, neon signs reflecting in puddles” |
| “Person moves quickly” | “Cyclist pedals three times, brakes, and stops at crosswalk” |
| “Cinematic look” | “Anamorphic 2.0x lens, shallow DOF, volumetric light” |

Ultra-Detailed Professional Prompts

For complex cinematic shots, you can specify professional production parameters:

- Format & Look: Duration, shutter angle, film stock emulation, grain

- Lenses & Filtration: Focal length, lens type, filtration

- Grade & Palette: Highlight treatment, mid-tone balance, shadow characteristics

- Lighting & Atmosphere: Light direction, bounce fill, practical lights, atmospheric effects

- Location & Framing: Foreground, midground, background details

- Sound: Diegetic audio description, ambient levels

Practical Tips from OpenAI

- Use the same prompt multiple times—each generation is a fresh take; sometimes the second or third option is better

- Shorter clips work better: Stitching two 4-second clips in editing often outperforms a single 8-second generation

- Be prepared to iterate: Small changes to camera, lighting, or action can shift outcomes dramatically
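The first two tips can be combined into a simple generation plan: split the target runtime into short segments, then budget several takes per segment. The sketch below is an illustration only; `plan_clips` and `takes_to_generate` are hypothetical helpers, and the default of three takes is an assumption rather than a recommendation from OpenAI.

```python
def plan_clips(total_seconds, clip_seconds=4):
    """Split a target runtime into equal short clips to generate and stitch later."""
    if total_seconds <= 0 or clip_seconds <= 0:
        raise ValueError("durations must be positive")
    if total_seconds % clip_seconds:
        raise ValueError("total_seconds must be a multiple of clip_seconds")
    count = total_seconds // clip_seconds
    # Each tuple is the (start, end) position of a segment on the final timeline.
    return [(i * clip_seconds, (i + 1) * clip_seconds) for i in range(count)]


def takes_to_generate(segments, takes_per_segment=3):
    """Total generations needed if every segment gets several fresh takes."""
    return len(segments) * takes_per_segment


segments = plan_clips(8)                   # two 4-second segments
total_takes = takes_to_generate(segments)  # 2 segments x 3 takes each
print(segments, total_takes)
```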

The Real Revolution: AI Editing Agents

While Sora 2 captures headlines for generation, industry experts, including a16z partner Justine Moore, argue that the true revolution is happening elsewhere: in the editing layer.

The 80/20 Rule of Video Production

Video creation follows a brutal 80/20 rule: 80% of time is spent on editing, 20% on shooting (or generating). The editing process involves:

- Sorting through hours of footage

- Making thousands of micro-decisions about pacing and rhythm

- Correcting lighting and audio

- Adding sound effects and music

- Adapting content for multiple platforms

Enter the AI Editing Agent

Moore’s insight is that three conditions have simultaneously matured:

- Visual models now understand content semantics and narrative structure

- Tool-using agents can execute editing operations, not just suggest them

- Generation quality has crossed the threshold for practical use

The result? AI agents that act as an “invisible post-production team,” handling the “dirty and tiresome work” that has traditionally consumed creators’ time.

What AI Editing Agents Can Do

| Task | Example Tools |
|---|---|
| Process management | Eddie AI processes hours of footage, identifies main shots, handles multi-camera angles |
| Multi-model orchestration | Glif coordinates image generation, video models, and stitching |
| Detail refinement | Descript’s Underlord adjusts lighting, removes audio noise, deletes filler words |
| Format adaptation | Overlap creates platform-specific cuts with node-based workflows |
| Taste optimization | Future agents will generate multiple edit drafts based on creative feedback |

The Emma Chamberlain Example

YouTuber Emma Chamberlain famously spent 30 to 40 hours editing a 15-minute vlog. Imagine an AI agent that:

- Watches your raw footage

- Asks about your goals

- Generates several edit drafts

- Takes feedback: “The beginning is too slow,” “Cut the middle part,” “Make the ending more impactful”

This isn’t speculative: the technology is already in place.

Industry Impact: AI Video in the Real World

The AI video revolution isn’t coming—it’s already reshaping industries. Perhaps no sector illustrates this more dramatically than short-form drama production.

The Short Drama Industry Transformation

In early 2026, ByteDance (TikTok’s parent company) released Seedance 2.0, an AI video model that addresses previously insurmountable challenges like character consistency and scene stability. The impact has been immediate and profound.

By the numbers:

- January 2026: 14,634 AI short dramas launched in China—over 470 new shows per day

- AI content now accounts for 38% of short drama rankings, up from just 7% the previous year

- Production costs have dropped to one-tenth of traditional live-action budgets

- Production timelines have compressed from months to days
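The first two figures above can be sanity-checked with quick arithmetic. The snippet below uses only the numbers quoted in the list; the variable names are illustrative.

```python
# Figures quoted in the list above.
shows_launched = 14_634
days_in_january = 31

per_day = shows_launched / days_in_january
print(round(per_day))  # about 472 launches per day, consistent with "over 470"

# Share of short drama rankings: 38% now versus 7% the previous year.
share_now, share_before = 0.38, 0.07
growth_factor = share_now / share_before
print(round(growth_factor, 1))  # roughly a 5.4x increase in ranking share
```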

The Economics Are Compelling

| Traditional Live-Action | AI-Generated |
|---|---|
| Budget: ~$60,000 minimum | Budget: $5,000–$10,000 |
| Timeline: 2–3 months | Timeline: Days |
| Cast: Actors, crew, extras | Cast: AI actors |
| Locations: Sets, permits | Locations: Generated |

Platforms like Douyin (Chinese TikTok) have responded with significant incentive programs, offering $360,000+ for top-tier AI short dramas.

Who Gets Disrupted?

The shift has immediate consequences for industry roles:

Roles most at risk:

- Background actors and extras

- Standardized production crew

- Entry-level editors and post-production staff

Roles gaining importance:

- Writers with strong storytelling ability

- Creative directors with aesthetic judgment

- AI tool operators and prompt engineers

- Producers who can orchestrate AI workflows

As one industry observer put it: “The roles being eliminated are not creators; they’re the standardized execution roles that previously served as entry points into the industry.”

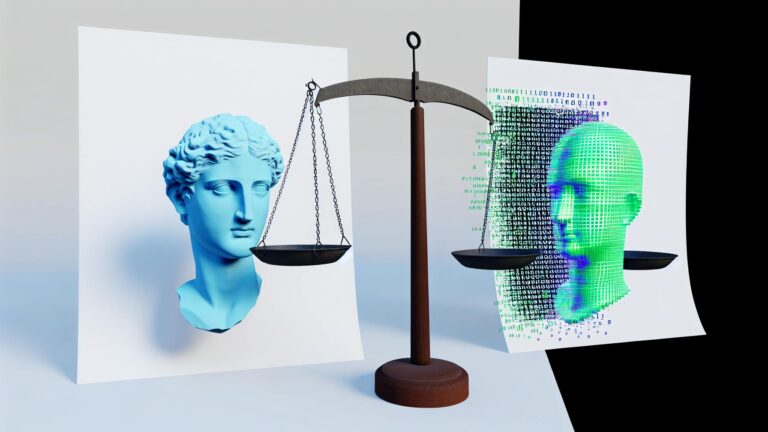

The Legal and Ethical Challenges

The explosion of AI-generated video has outpaced legal frameworks, creating significant risks for creators and platforms.

The Portrayal Crisis

In March 2026, multiple AI short dramas faced backlash for characters bearing strong resemblance to real celebrities.

Case 1: The AI short drama “Reborn, I Become Mother’s Guardian” featured a character that viewers identified as resembling actor Yang Zi. The production company claimed the image was “randomly generated.”

Case 2: “Beijing Wind and Cloud” featured a protagonist resembling actor Xiao Zhan. The production attempted to evade responsibility by renaming the character with a homophone of the actor’s name (also romanized as “Xiao Zhan”) and blurring the character’s face before eventual removal.

Case 3: Media company Yaoke announced new AI digital actors, only to face public backlash over their resemblance to multiple real performers.

Legal Principles

According to legal experts cited in the Securities Daily report:

“Even if production companies claim images are ‘randomly generated’ or ‘composited,’ they are not automatically exempt from liability. The law focuses on whether the result causes confusion or misidentification—not the technical randomness of the process.”

Key legal principles:

- Portrait rights extend to AI-generated images that evoke specific celebrities

- Platform liability extends beyond “notice and takedown” to proactive duty of care if platforms know or should know of infringing content

- Facial comparison tools make claims of accidental resemblance increasingly implausible

Platform Responsibility

Under China’s Civil Code Article 1197, platforms may bear joint liability if they fail to take necessary action when they know or should know of infringing content. This standard is likely to influence global platform practices as AI-generated content proliferates.

The Call for Governance

Industry experts advocate for a coordinated governance framework:

| Stakeholder | Responsibility |
|---|---|
| Producers | Ensure training data is legally sourced; verify generated images don’t infringe rights |
| Platforms | Move from reactive takedown to proactive review; implement screening tools |
| Technology providers | Embed legal compliance into model design; make infringement detection native |
| Regulators | Cross-agency coordination; industry self-regulation agreements |

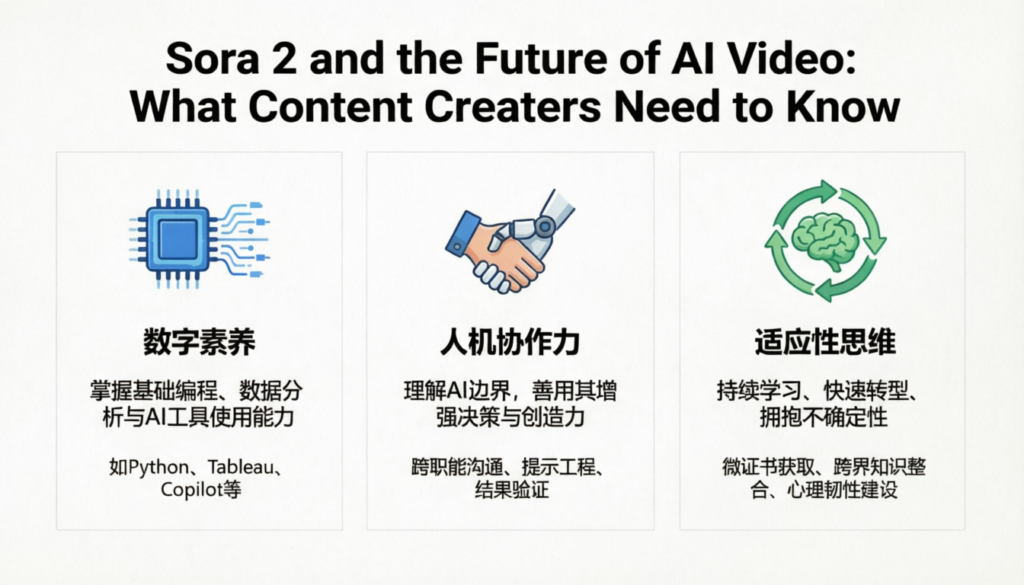

What Content Creators Need to Do Now

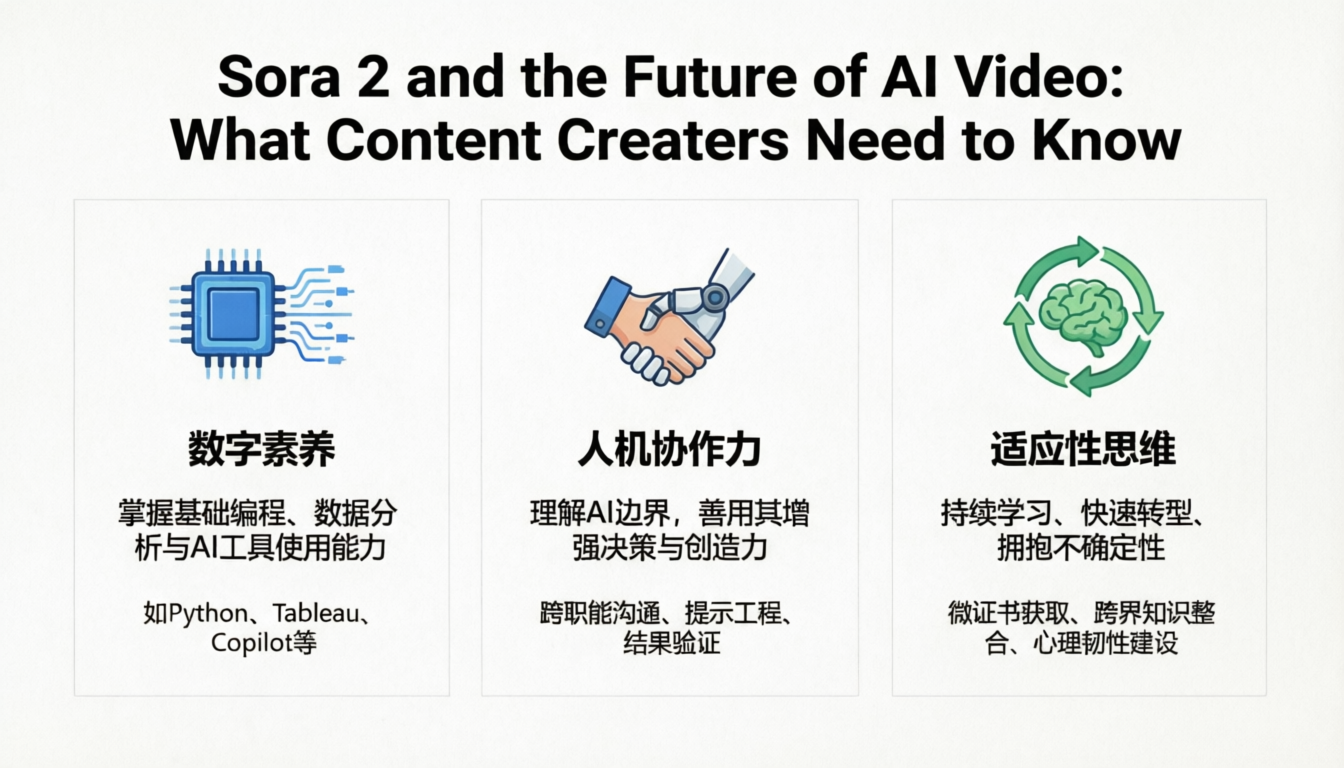

1. Develop AI Literacy

Understanding AI video tools is no longer optional. Spend time with Sora 2, Runway Gen-4, and Pika Labs to understand their capabilities and limitations. The gap between creators who embrace these tools and those who ignore them will widen rapidly.

2. Reframe Your Role

The creator’s role is shifting from hands-on execution to creative direction. Your value is increasingly in:

- Taste and judgment: Knowing what good looks like

- Storytelling: Structure, pacing, emotional arc

- Workflow design: Orchestrating AI tools efficiently

- Ethical oversight: Ensuring responsible use

3. Build a Hybrid Workflow

The most effective creators use multiple tools strategically:

- Sora 2 for high-quality hero shots

- Runway for character consistency across scenes

- AI editing agents for post-production

- Traditional tools for final polish and control

4. Document Your Process

As AI tools become more capable, the ability to reproduce results becomes valuable. Document prompts, settings, and workflows that deliver consistent quality.
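One lightweight way to do this is an append-only log with one JSON record per generation. The sketch below is an assumption about how such a log might look: the `log_generation` and `load_log` helpers, the field names, and the `generations.jsonl` filename are all hypothetical, and the example writes to a temporary directory so it can run anywhere.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def log_generation(log_path, prompt, settings, notes=""):
    """Append one generation record to a JSON Lines file and return it."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "settings": settings,
        "notes": notes,
    }
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def load_log(log_path):
    """Read every record back, skipping blank lines."""
    with Path(log_path).open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


log_file = Path(tempfile.mkdtemp()) / "generations.jsonl"
log_generation(
    log_file,
    "Wet asphalt, zebra crosswalk, neon signs reflecting in puddles",
    {"model": "sora-2", "size": "1280x720", "seconds": 4},
    notes="take 2 had the best reflections",
)
records = load_log(log_file)
print(len(records), records[0]["settings"])
```

A plain-text log like this also doubles as the record of your generation process if you ever need to show how a clip was made.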

5. Understand the Legal Landscape

- Never use AI-generated content depicting real people without consent

- Scrutinize generated characters for resemblance to celebrities

- Keep records of your generation process to demonstrate good faith

- Monitor platform policies—they’re evolving rapidly

6. Invest in What AI Can’t Replace

AI can generate visuals but cannot replicate:

- Authentic personal experience

- Genuine human connection with your audience

- Unique creative vision

- Ethical judgment and responsibility

The Future: What’s Next

2026–2027 Predictions

| Trend | Implication |
|---|---|
| AI editing agents mature | Post-production time drops by 80%; creators focus on creative direction |
| Character consistency improves | Episodic AI-generated content becomes viable |
| Regulatory frameworks emerge | Clearer rules for AI-generated likeness rights |
| Costs continue to drop | AI video becomes accessible to individual creators |
| Multi-modal integration | Text, image, video, and audio generation unify |

The Long View

Video is becoming the dominant medium for learning, marketing, and connection. The bottleneck has never been generation; it has been editing. With AI editing agents poised to remove that bottleneck, we are about to see an explosion of high-quality video content that was previously impossible to produce at scale.

For creators, this is both opportunity and challenge. The tools are democratizing, but the bar for quality is rising. Those who master the new workflow—combining AI generation with human taste, storytelling, and ethical judgment—will thrive.

Conclusion

Sora 2 represents a significant leap forward in AI video generation, but the real story is larger than any single model. We are witnessing the emergence of a complete AI-powered video production stack: generation tools like Sora 2, Runway, and Pika Labs; editing agents that handle post-production; and increasingly sophisticated workflows that combine them.

For content creators, the message is clear: learn these tools, understand their implications, and start building hybrid workflows. The future of video is not human versus machine—it’s human amplified by machine.

The question isn’t whether AI will transform video creation. That transformation is already underway. The question is whether you’ll be positioned to lead—or be left behind.